
openshift/os: increase requested resources #29031

Conversation

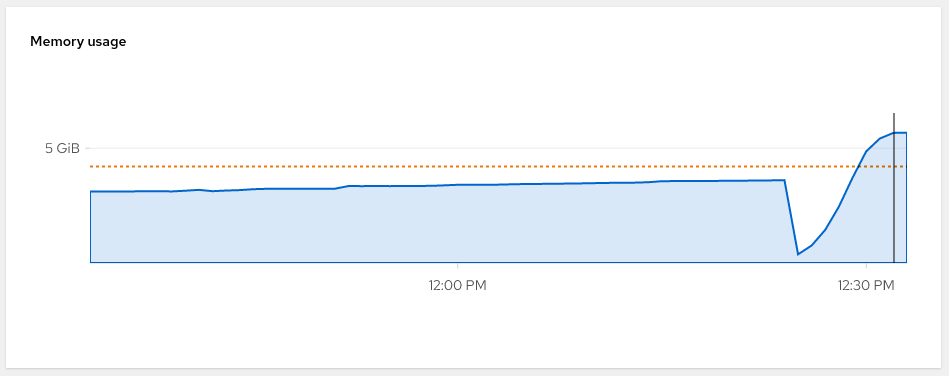

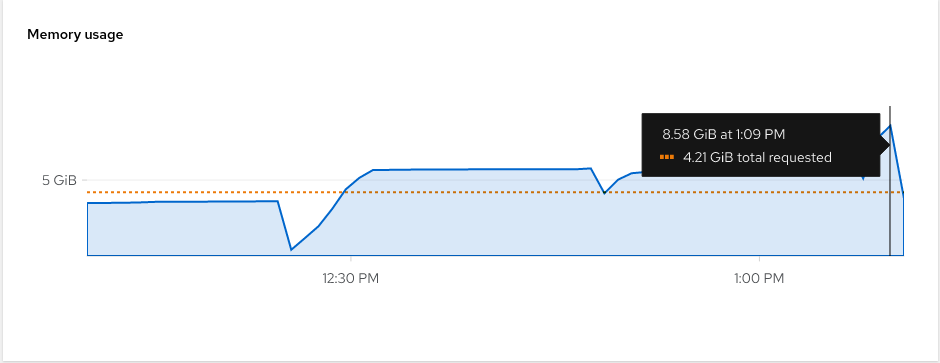

The `ostree` operations that happen as part of the `cosa-build` image build are incredibly memory hungry during the `ostree commit` and `ostree container` operations. They move upwards of 2G of data into memory and onto disk and vice versa.

The original resource requests were insufficient, causing the CI jobs to be incredibly slow and sometimes to time out completely. It's been observed that the `ostree container encapsulate` operation ends up requesting nearly 6Gi of memory.

This bumps both the memory requests and the CPU requests for the image builds. It should give the jobs some healthy headroom to perform the operations at a reasonable pace.


[APPROVALNOTIFIER] This PR is APPROVED

This pull-request has been approved by: miabbott

The full list of commands accepted by this bot can be found here. The pull request process is described here.

Needs approval from an approver in each of these files:

Approvers can indicate their approval by writing |


I could be convinced of bumping the CPU request back down to |

Hmm... that must be a bug somewhere. I'm not immediately seeing large heap usage here, peaking at just 3.4MB.

To be fair, I was loosely correlating that operation with what I was seeing in the metrics dashboard, so there could be a misalignment. On this topic of resource usage, I've not been able to reproduce the drastic slowness when spinning up a I'm beginning to think there are some special conditions applied to the |


Well, giving the

Open to new suggestions.


/retest

In openshift/release#29031 we are debugging very slow build times. Of the approximately 3h build time, 30 minutes is compressing all the files into the archive repo in `tmp/repo`. This is all essentially wasted time, because we now canonically represent the ostree commit as an ociarchive, which is re-compressed again differently.

Eventually, we should drop `tmp/repo` and have `cache/repo-build` be the canonical uncompressed cache. In the short term though, ostree makes it easy to turn down the zlib compression level, which can have a dramatic impact here.

Locally on my desktop:

Before:

```
$ time sudo ostree --repo=tmp/repo pull-local cache/repo-build/ 988a1ffb47df4dda08df4d97d8e5f39f34c624d5c54b9c870f696203011758ef
3009 metadata, 19604 content objects imported; 1.3 GB content written
________________________________________________________
Executed in    8.33 secs    fish           external
   usr time   44.23 secs  836.00 micros   44.23 secs
   sys time    3.95 secs  108.00 micros    3.95 secs
```

After:

```
$ time sudo ostree --repo=tmp/repo pull-local cache/repo-build/ 988a1ffb47df4dda08df4d97d8e5f39f34c624d5c54b9c870f696203011758ef
3009 metadata, 19604 content objects imported; 1.3 GB content written
________________________________________________________
Executed in    6.09 secs    fish           external
   usr time   21.94 secs    0.00 micros   21.94 secs
   sys time    4.34 secs  955.00 micros    4.34 secs
```

The wall clock time isn't hugely different, but that's because my desktop is a hyperthreaded, otherwise idle i9-9900k. The actual CPU time spent is notably lower. In the Prow cluster where we're contending for CPU on slower processors, and further we are limited by cpu shares, this should help.
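The difference between CPU time and wall-clock time in the measurements above can be reproduced without ostree. The following is a minimal Python sketch, not the coreos-assembler change itself: it compresses a synthetic payload with the standard `zlib` module at two levels and reports both timings. The helper names (`make_sample`, `measure`), the payload shape, and the choice of level 2 are assumptions made for illustration; level 6 is zlib's default, and the thread does not state which level was actually configured.

```python
import os
import time
import zlib


def make_sample(size: int = 4 * 1024 * 1024) -> bytes:
    """Build a synthetic, partly compressible payload.

    Hypothetical stand-in for OS content: each 4 KiB block is 1 KiB of
    random bytes followed by 3 KiB of zeros.
    """
    block = os.urandom(1024) + b"\0" * 3072
    return block * (size // len(block))


def measure(data: bytes, level: int) -> dict:
    """Compress `data` at the given zlib level and record size and timings."""
    wall_start = time.perf_counter()   # elapsed real time
    cpu_start = time.process_time()    # CPU time charged to this process
    payload = zlib.compress(data, level)
    cpu_s = time.process_time() - cpu_start
    wall_s = time.perf_counter() - wall_start
    return {
        "level": level,
        "bytes": len(payload),
        "cpu_s": cpu_s,
        "wall_s": wall_s,
        "payload": payload,
    }


if __name__ == "__main__":
    sample = make_sample()
    # 6 is zlib's default level; 2 is an illustrative lower level.
    for level in (6, 2):
        result = measure(sample, level)
        print(
            f"level={result['level']} "
            f"compressed={result['bytes']} bytes "
            f"cpu={result['cpu_s']:.3f}s wall={result['wall_s']:.3f}s"
        )
```

On a CPU-constrained runner the `cpu_s` figure is the one that matters, since a process limited by CPU shares cannot hide compression cost behind idle cores the way an otherwise idle desktop can.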



coreos/coreos-assembler#2888 landed; let's see if that improves things here.

/retest


/retest


@miabbott: The following tests failed, say

Full PR test history. Your PR dashboard. Instructions for interacting with me using PR comments are available here. If you have questions or suggestions related to my behavior, please file an issue against the kubernetes/test-infra repository. I understand the commands that are listed here.


This isn't the fix we want; see openshift/os#839 and #29329.

/close


@miabbott: Closed this PR. In response to this:

