New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Quick post-mortem of recently implemented RAR optimizations #8432

Comments

|

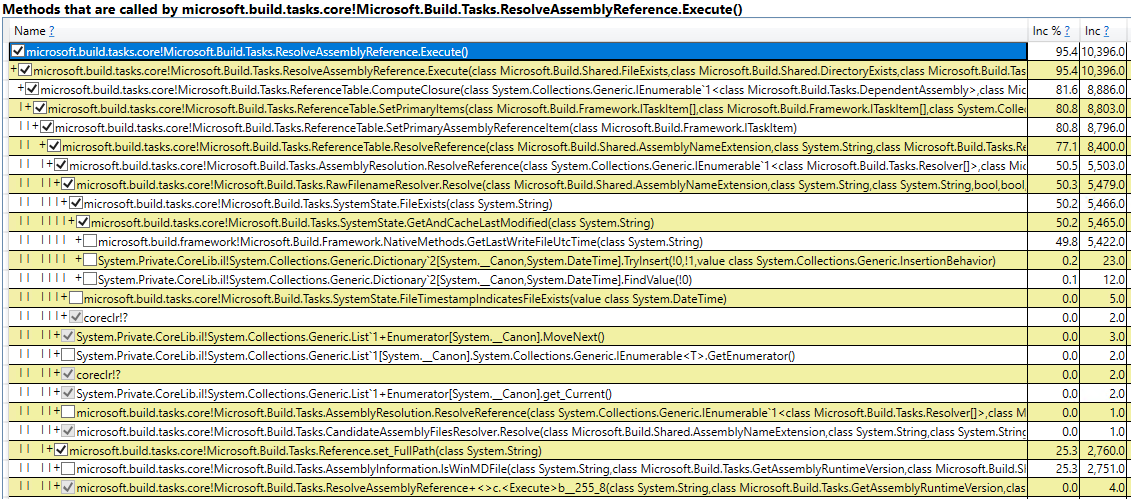

Measurements have been done with a micro-benchmark, directly executing the RAR task on a single thread in a tight loop. For each iteration the task was given parameters identical to those given when building a simple AspNetCore 7.0 project with The following table has a list of commits under src/Tasks/AssemblyDependency made from Jan 2020 to Jan 2023, that look like they could have any perf relevance. The results are rounded to the nearest half-of-percent which is about the precision at which I was able to produce the numbers in my environment.

Overall, from Jan 2020 to Jan 2023 RAR got about 20% slower (11.7 ms -> 14.3 ms per HOT invocation). |

|

@Forgind, I know that pre-cache is targeted at the cold / first build scenario so it's not expected to show here. I am curious why it's not being used, though. I see that we ship |

|

The work will continue in #8441. |

|

Related #2015 |

As I recall, we saw some promising early numbers, so I pushed through to finish the feature, but then later testing didn't find much of a benefit, so although we can use it, I'm not sure if it's actually valuable. I'm not the best at testing perf, so if you find very different results, I'd love to be wrong! If you find similar results, though, I wouldn't have a problem with removing it. I wouldn't expect there to be even 0.5% performance regression in dev loop scenarios; I feel like that loss is worse than the not-win from having a feature that doesn't really help. (We'd probably have to maintain public API, but that's fine.) Also, I'm surprised #6533 was bad. That's bad enough that I'd consider reverting it immediately and trying to figure out what went wrong afterwards. What do you think? |

The problem is on this line:

Assigning a different path to the |

|

Is it surprising that #6533 didn't trigger some perf gate/test at the time? |

I'm a little confused—looking at the diff for #6533, it didn't introduce that assignment; that assignment has existed since MSBuild moved to GitHub, at least. All 6533 did was add one new call to NormalizeFullPath and change a call from Path.GetFullPath to another NormalizePath call. Am I missing something? |

Surprising to me, at least. RAR is a substantial fraction of total build time, so it'd be a major oversight to have it not at all represented in our tests. My guess is that it regressed 39% in some scenarios—perhaps scenarios in which _isWinMDFile() is unusually expensive—but much less in another scenario, perhaps if it's already cached. (I think that's one of the things we cache in our on-disk and in-process caches.) Part of what's confusing to me is that my explanation suggests it would be very fast in exactly the scenario ladipro tested and slow in a scenario I'd expect our tests to catch...so perhaps I have something flipped? In any event, the real impact is probably less than 39%, or we'd have customers ripping us to shreds by now, but I can easily imagine it's still a substantial perf regression on average—one we should try to fix. |

|

We don't currently have good automated perf tests that would catch this. VS tests (PerfDDRITs, Speedometer) are too noisy for this to be noticed; they are focused on end-to-end VS scenarios of which RAR is a tiny piece. The .NET perf lab runs some basic command line builds where this could make a measurable difference, but I'm not sure if anyone is looking at the results anymore. We should start perf testing MSBuild more like a library. In this particular case, we know RAR is actually used as a direct library call by VS (a bit of a hack, alas) and plays an important role in design-time builds so I'd say introducing a microbenchmark measuring RAR only is justified. Similar for evaluation and a few more. Then of course builds (incremental, full) of solutions with varying size, with/without binlog, etc. And most importantly with good automated reporting. We'll get there! |

Without normalizing, the path assigned to With normalizing we introduced a new path, unknown to the cache, which makes |

|

The DT RAR calls do something a bit different, right? Like still call the RAR task but with different inputs?

Ok, but you said this was a dev inner loop scenario, right? Why didn't the normalized path get added to the cache at any point? I would've imagined it might force one more expensive operation once, but by the time we're talking about resolving the same references for the second time, it should be well aware of the normalized path and be just as fast for that part than the not-normalized path? |

This cache is currently per RAR invocation ( |

Motivation

#8422 strives to improve the performance of ResolveAssemblyReferences, which is a recurring theme. We should get a sense of how the work that's recently been done in this area moved the needle. We're specifically interested in retrospectively measuring RAR as used when building a modern .NET app.

Expected outputs

The text was updated successfully, but these errors were encountered: