diff --git a/README.md b/README.md

index 4aa7e6c882ea4..f2d65752d67a2 100644

--- a/README.md

+++ b/README.md

@@ -279,6 +279,7 @@ Current number of checkpoints: ** (from Inria/Facebook/Sorbonne) released with the paper [CamemBERT: a Tasty French Language Model](https://arxiv.org/abs/1911.03894) by Louis Martin*, Benjamin Muller*, Pedro Javier Ortiz Suárez*, Yoann Dupont, Laurent Romary, Éric Villemonte de la Clergerie, Djamé Seddah and Benoît Sagot.

1. **[CANINE](https://huggingface.co/docs/transformers/model_doc/canine)** (from Google Research) released with the paper [CANINE: Pre-training an Efficient Tokenization-Free Encoder for Language Representation](https://arxiv.org/abs/2103.06874) by Jonathan H. Clark, Dan Garrette, Iulia Turc, John Wieting.

1. **[CLIP](https://huggingface.co/docs/transformers/model_doc/clip)** (from OpenAI) released with the paper [Learning Transferable Visual Models From Natural Language Supervision](https://arxiv.org/abs/2103.00020) by Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger, Ilya Sutskever.

+1. **[CLIPSeg](https://huggingface.co/docs/transformers/main/model_doc/clipseg)** (from University of Göttingen) released with the paper [Image Segmentation Using Text and Image Prompts](https://arxiv.org/abs/2112.10003) by Timo Lüddecke and Alexander Ecker.

1. **[CodeGen](https://huggingface.co/docs/transformers/model_doc/codegen)** (from Salesforce) released with the paper [A Conversational Paradigm for Program Synthesis](https://arxiv.org/abs/2203.13474) by Erik Nijkamp, Bo Pang, Hiroaki Hayashi, Lifu Tu, Huan Wang, Yingbo Zhou, Silvio Savarese, Caiming Xiong.

1. **[Conditional DETR](https://huggingface.co/docs/transformers/model_doc/conditional_detr)** (from Microsoft Research Asia) released with the paper [Conditional DETR for Fast Training Convergence](https://arxiv.org/abs/2108.06152) by Depu Meng, Xiaokang Chen, Zejia Fan, Gang Zeng, Houqiang Li, Yuhui Yuan, Lei Sun, Jingdong Wang.

1. **[ConvBERT](https://huggingface.co/docs/transformers/model_doc/convbert)** (from YituTech) released with the paper [ConvBERT: Improving BERT with Span-based Dynamic Convolution](https://arxiv.org/abs/2008.02496) by Zihang Jiang, Weihao Yu, Daquan Zhou, Yunpeng Chen, Jiashi Feng, Shuicheng Yan.

diff --git a/README_es.md b/README_es.md

index c08ec500892d5..32156a08e2674 100644

--- a/README_es.md

+++ b/README_es.md

@@ -279,6 +279,7 @@ Número actual de puntos de control: ** (from Inria/Facebook/Sorbonne) released with the paper [CamemBERT: a Tasty French Language Model](https://arxiv.org/abs/1911.03894) by Louis Martin*, Benjamin Muller*, Pedro Javier Ortiz Suárez*, Yoann Dupont, Laurent Romary, Éric Villemonte de la Clergerie, Djamé Seddah and Benoît Sagot.

1. **[CANINE](https://huggingface.co/docs/transformers/model_doc/canine)** (from Google Research) released with the paper [CANINE: Pre-training an Efficient Tokenization-Free Encoder for Language Representation](https://arxiv.org/abs/2103.06874) by Jonathan H. Clark, Dan Garrette, Iulia Turc, John Wieting.

1. **[CLIP](https://huggingface.co/docs/transformers/model_doc/clip)** (from OpenAI) released with the paper [Learning Transferable Visual Models From Natural Language Supervision](https://arxiv.org/abs/2103.00020) by Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger, Ilya Sutskever.

+1. **[CLIPSeg](https://huggingface.co/docs/transformers/main/model_doc/clipseg)** (from University of Göttingen) released with the paper [Image Segmentation Using Text and Image Prompts](https://arxiv.org/abs/2112.10003) by Timo Lüddecke and Alexander Ecker.

1. **[CodeGen](https://huggingface.co/docs/transformers/model_doc/codegen)** (from Salesforce) released with the paper [A Conversational Paradigm for Program Synthesis](https://arxiv.org/abs/2203.13474) by Erik Nijkamp, Bo Pang, Hiroaki Hayashi, Lifu Tu, Huan Wang, Yingbo Zhou, Silvio Savarese, Caiming Xiong.

1. **[Conditional DETR](https://huggingface.co/docs/transformers/model_doc/conditional_detr)** (from Microsoft Research Asia) released with the paper [Conditional DETR for Fast Training Convergence](https://arxiv.org/abs/2108.06152) by Depu Meng, Xiaokang Chen, Zejia Fan, Gang Zeng, Houqiang Li, Yuhui Yuan, Lei Sun, Jingdong Wang.

1. **[ConvBERT](https://huggingface.co/docs/transformers/model_doc/convbert)** (from YituTech) released with the paper [ConvBERT: Improving BERT with Span-based Dynamic Convolution](https://arxiv.org/abs/2008.02496) by Zihang Jiang, Weihao Yu, Daquan Zhou, Yunpeng Chen, Jiashi Feng, Shuicheng Yan.

diff --git a/README_ja.md b/README_ja.md

index eed7d204f8368..edb49ce9d5c91 100644

--- a/README_ja.md

+++ b/README_ja.md

@@ -314,6 +314,7 @@ Flax、PyTorch、TensorFlowをcondaでインストールする方法は、それ

1. **[CamemBERT](https://huggingface.co/docs/transformers/model_doc/camembert)** (from Inria/Facebook/Sorbonne) released with the paper [CamemBERT: a Tasty French Language Model](https://arxiv.org/abs/1911.03894) by Louis Martin*, Benjamin Muller*, Pedro Javier Ortiz Suárez*, Yoann Dupont, Laurent Romary, Éric Villemonte de la Clergerie, Djamé Seddah and Benoît Sagot.

1. **[CANINE](https://huggingface.co/docs/transformers/model_doc/canine)** (from Google Research) released with the paper [CANINE: Pre-training an Efficient Tokenization-Free Encoder for Language Representation](https://arxiv.org/abs/2103.06874) by Jonathan H. Clark, Dan Garrette, Iulia Turc, John Wieting.

1. **[CLIP](https://huggingface.co/docs/transformers/model_doc/clip)** (from OpenAI) released with the paper [Learning Transferable Visual Models From Natural Language Supervision](https://arxiv.org/abs/2103.00020) by Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger, Ilya Sutskever.

+1. **[CLIPSeg](https://huggingface.co/docs/transformers/main/model_doc/clipseg)** (from University of Göttingen) released with the paper [Image Segmentation Using Text and Image Prompts](https://arxiv.org/abs/2112.10003) by Timo Lüddecke and Alexander Ecker.

1. **[CodeGen](https://huggingface.co/docs/transformers/model_doc/codegen)** (from Salesforce) released with the paper [A Conversational Paradigm for Program Synthesis](https://arxiv.org/abs/2203.13474) by Erik Nijkamp, Bo Pang, Hiroaki Hayashi, Lifu Tu, Huan Wang, Yingbo Zhou, Silvio Savarese, Caiming Xiong.

1. **[Conditional DETR](https://huggingface.co/docs/transformers/model_doc/conditional_detr)** (from Microsoft Research Asia) released with the paper [Conditional DETR for Fast Training Convergence](https://arxiv.org/abs/2108.06152) by Depu Meng, Xiaokang Chen, Zejia Fan, Gang Zeng, Houqiang Li, Yuhui Yuan, Lei Sun, Jingdong Wang.

1. **[ConvBERT](https://huggingface.co/docs/transformers/model_doc/convbert)** (from YituTech) released with the paper [ConvBERT: Improving BERT with Span-based Dynamic Convolution](https://arxiv.org/abs/2008.02496) by Zihang Jiang, Weihao Yu, Daquan Zhou, Yunpeng Chen, Jiashi Feng, Shuicheng Yan.

diff --git a/README_ko.md b/README_ko.md

index 28a2e2aa46434..33bcdda6b6193 100644

--- a/README_ko.md

+++ b/README_ko.md

@@ -229,6 +229,7 @@ Flax, PyTorch, TensorFlow 설치 페이지에서 이들을 conda로 설치하는

1. **[CamemBERT](https://huggingface.co/docs/transformers/model_doc/camembert)** (from Inria/Facebook/Sorbonne) released with the paper [CamemBERT: a Tasty French Language Model](https://arxiv.org/abs/1911.03894) by Louis Martin*, Benjamin Muller*, Pedro Javier Ortiz Suárez*, Yoann Dupont, Laurent Romary, Éric Villemonte de la Clergerie, Djamé Seddah and Benoît Sagot.

1. **[CANINE](https://huggingface.co/docs/transformers/model_doc/canine)** (from Google Research) released with the paper [CANINE: Pre-training an Efficient Tokenization-Free Encoder for Language Representation](https://arxiv.org/abs/2103.06874) by Jonathan H. Clark, Dan Garrette, Iulia Turc, John Wieting.

1. **[CLIP](https://huggingface.co/docs/transformers/model_doc/clip)** (from OpenAI) released with the paper [Learning Transferable Visual Models From Natural Language Supervision](https://arxiv.org/abs/2103.00020) by Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger, Ilya Sutskever.

+1. **[CLIPSeg](https://huggingface.co/docs/transformers/main/model_doc/clipseg)** (from University of Göttingen) released with the paper [Image Segmentation Using Text and Image Prompts](https://arxiv.org/abs/2112.10003) by Timo Lüddecke and Alexander Ecker.

1. **[CodeGen](https://huggingface.co/docs/transformers/model_doc/codegen)** (from Salesforce) released with the paper [A Conversational Paradigm for Program Synthesis](https://arxiv.org/abs/2203.13474) by Erik Nijkamp, Bo Pang, Hiroaki Hayashi, Lifu Tu, Huan Wang, Yingbo Zhou, Silvio Savarese, Caiming Xiong.

1. **[Conditional DETR](https://huggingface.co/docs/transformers/model_doc/conditional_detr)** (from Microsoft Research Asia) released with the paper [Conditional DETR for Fast Training Convergence](https://arxiv.org/abs/2108.06152) by Depu Meng, Xiaokang Chen, Zejia Fan, Gang Zeng, Houqiang Li, Yuhui Yuan, Lei Sun, Jingdong Wang.

1. **[ConvBERT](https://huggingface.co/docs/transformers/model_doc/convbert)** (from YituTech) released with the paper [ConvBERT: Improving BERT with Span-based Dynamic Convolution](https://arxiv.org/abs/2008.02496) by Zihang Jiang, Weihao Yu, Daquan Zhou, Yunpeng Chen, Jiashi Feng, Shuicheng Yan.

diff --git a/README_zh-hans.md b/README_zh-hans.md

index 7f877c2bed209..dbf8c8b8e21ef 100644

--- a/README_zh-hans.md

+++ b/README_zh-hans.md

@@ -253,6 +253,7 @@ conda install -c huggingface transformers

1. **[CamemBERT](https://huggingface.co/docs/transformers/model_doc/camembert)** (来自 Inria/Facebook/Sorbonne) 伴随论文 [CamemBERT: a Tasty French Language Model](https://arxiv.org/abs/1911.03894) 由 Louis Martin*, Benjamin Muller*, Pedro Javier Ortiz Suárez*, Yoann Dupont, Laurent Romary, Éric Villemonte de la Clergerie, Djamé Seddah and Benoît Sagot 发布。

1. **[CANINE](https://huggingface.co/docs/transformers/model_doc/canine)** (来自 Google Research) 伴随论文 [CANINE: Pre-training an Efficient Tokenization-Free Encoder for Language Representation](https://arxiv.org/abs/2103.06874) 由 Jonathan H. Clark, Dan Garrette, Iulia Turc, John Wieting 发布。

1. **[CLIP](https://huggingface.co/docs/transformers/model_doc/clip)** (来自 OpenAI) 伴随论文 [Learning Transferable Visual Models From Natural Language Supervision](https://arxiv.org/abs/2103.00020) 由 Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger, Ilya Sutskever 发布。

+1. **[CLIPSeg](https://huggingface.co/docs/transformers/main/model_doc/clipseg)** (来自 University of Göttingen) 伴随论文 [Image Segmentation Using Text and Image Prompts](https://arxiv.org/abs/2112.10003) 由 Timo Lüddecke and Alexander Ecker 发布。

1. **[CodeGen](https://huggingface.co/docs/transformers/model_doc/codegen)** (来自 Salesforce) 伴随论文 [A Conversational Paradigm for Program Synthesis](https://arxiv.org/abs/2203.13474) 由 Erik Nijkamp, Bo Pang, Hiroaki Hayashi, Lifu Tu, Huan Wang, Yingbo Zhou, Silvio Savarese, Caiming Xiong 发布。

1. **[Conditional DETR](https://huggingface.co/docs/transformers/model_doc/conditional_detr)** (来自 Microsoft Research Asia) 伴随论文 [Conditional DETR for Fast Training Convergence](https://arxiv.org/abs/2108.06152) 由 Depu Meng, Xiaokang Chen, Zejia Fan, Gang Zeng, Houqiang Li, Yuhui Yuan, Lei Sun, Jingdong Wang 发布。

1. **[ConvBERT](https://huggingface.co/docs/transformers/model_doc/convbert)** (来自 YituTech) 伴随论文 [ConvBERT: Improving BERT with Span-based Dynamic Convolution](https://arxiv.org/abs/2008.02496) 由 Zihang Jiang, Weihao Yu, Daquan Zhou, Yunpeng Chen, Jiashi Feng, Shuicheng Yan 发布。

diff --git a/README_zh-hant.md b/README_zh-hant.md

index e5764c6ce8f15..92ca90cecdd8e 100644

--- a/README_zh-hant.md

+++ b/README_zh-hant.md

@@ -265,6 +265,7 @@ conda install -c huggingface transformers

1. **[CamemBERT](https://huggingface.co/docs/transformers/model_doc/camembert)** (from Inria/Facebook/Sorbonne) released with the paper [CamemBERT: a Tasty French Language Model](https://arxiv.org/abs/1911.03894) by Louis Martin*, Benjamin Muller*, Pedro Javier Ortiz Suárez*, Yoann Dupont, Laurent Romary, Éric Villemonte de la Clergerie, Djamé Seddah and Benoît Sagot.

1. **[CANINE](https://huggingface.co/docs/transformers/model_doc/canine)** (from Google Research) released with the paper [CANINE: Pre-training an Efficient Tokenization-Free Encoder for Language Representation](https://arxiv.org/abs/2103.06874) by Jonathan H. Clark, Dan Garrette, Iulia Turc, John Wieting.

1. **[CLIP](https://huggingface.co/docs/transformers/model_doc/clip)** (from OpenAI) released with the paper [Learning Transferable Visual Models From Natural Language Supervision](https://arxiv.org/abs/2103.00020) by Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger, Ilya Sutskever.

+1. **[CLIPSeg](https://huggingface.co/docs/transformers/main/model_doc/clipseg)** (from University of Göttingen) released with the paper [Image Segmentation Using Text and Image Prompts](https://arxiv.org/abs/2112.10003) by Timo Lüddecke and Alexander Ecker.

1. **[CodeGen](https://huggingface.co/docs/transformers/model_doc/codegen)** (from Salesforce) released with the paper [A Conversational Paradigm for Program Synthesis](https://arxiv.org/abs/2203.13474) by Erik Nijkamp, Bo Pang, Hiroaki Hayashi, Lifu Tu, Huan Wang, Yingbo Zhou, Silvio Savarese, Caiming Xiong.

1. **[Conditional DETR](https://huggingface.co/docs/transformers/model_doc/conditional_detr)** (from Microsoft Research Asia) released with the paper [Conditional DETR for Fast Training Convergence](https://arxiv.org/abs/2108.06152) by Depu Meng, Xiaokang Chen, Zejia Fan, Gang Zeng, Houqiang Li, Yuhui Yuan, Lei Sun, Jingdong Wang.

1. **[ConvBERT](https://huggingface.co/docs/transformers/model_doc/convbert)** (from YituTech) released with the paper [ConvBERT: Improving BERT with Span-based Dynamic Convolution](https://arxiv.org/abs/2008.02496) by Zihang Jiang, Weihao Yu, Daquan Zhou, Yunpeng Chen, Jiashi Feng, Shuicheng Yan.

diff --git a/docs/source/en/_toctree.yml b/docs/source/en/_toctree.yml

index a6706cb774664..1cd287130db48 100644

--- a/docs/source/en/_toctree.yml

+++ b/docs/source/en/_toctree.yml

@@ -466,6 +466,8 @@

sections:

- local: model_doc/clip

title: CLIP

+ - local: model_doc/clipseg

+ title: CLIPSeg

- local: model_doc/data2vec

title: Data2Vec

- local: model_doc/donut

diff --git a/docs/source/en/index.mdx b/docs/source/en/index.mdx

index bcc832a250ded..5f446f21b5365 100644

--- a/docs/source/en/index.mdx

+++ b/docs/source/en/index.mdx

@@ -67,6 +67,7 @@ The documentation is organized into five sections:

1. **[CamemBERT](model_doc/camembert)** (from Inria/Facebook/Sorbonne) released with the paper [CamemBERT: a Tasty French Language Model](https://arxiv.org/abs/1911.03894) by Louis Martin*, Benjamin Muller*, Pedro Javier Ortiz Suárez*, Yoann Dupont, Laurent Romary, Éric Villemonte de la Clergerie, Djamé Seddah and Benoît Sagot.

1. **[CANINE](model_doc/canine)** (from Google Research) released with the paper [CANINE: Pre-training an Efficient Tokenization-Free Encoder for Language Representation](https://arxiv.org/abs/2103.06874) by Jonathan H. Clark, Dan Garrette, Iulia Turc, John Wieting.

1. **[CLIP](model_doc/clip)** (from OpenAI) released with the paper [Learning Transferable Visual Models From Natural Language Supervision](https://arxiv.org/abs/2103.00020) by Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger, Ilya Sutskever.

+1. **[CLIPSeg](model_doc/clipseg)** (from University of Göttingen) released with the paper [Image Segmentation Using Text and Image Prompts](https://arxiv.org/abs/2112.10003) by Timo Lüddecke and Alexander Ecker.

1. **[CodeGen](model_doc/codegen)** (from Salesforce) released with the paper [A Conversational Paradigm for Program Synthesis](https://arxiv.org/abs/2203.13474) by Erik Nijkamp, Bo Pang, Hiroaki Hayashi, Lifu Tu, Huan Wang, Yingbo Zhou, Silvio Savarese, Caiming Xiong.

1. **[Conditional DETR](model_doc/conditional_detr)** (from Microsoft Research Asia) released with the paper [Conditional DETR for Fast Training Convergence](https://arxiv.org/abs/2108.06152) by Depu Meng, Xiaokang Chen, Zejia Fan, Gang Zeng, Houqiang Li, Yuhui Yuan, Lei Sun, Jingdong Wang.

1. **[ConvBERT](model_doc/convbert)** (from YituTech) released with the paper [ConvBERT: Improving BERT with Span-based Dynamic Convolution](https://arxiv.org/abs/2008.02496) by Zihang Jiang, Weihao Yu, Daquan Zhou, Yunpeng Chen, Jiashi Feng, Shuicheng Yan.

@@ -223,6 +224,7 @@ Flax), PyTorch, and/or TensorFlow.

| CamemBERT | ✅ | ✅ | ✅ | ✅ | ❌ |

| CANINE | ✅ | ❌ | ✅ | ❌ | ❌ |

| CLIP | ✅ | ✅ | ✅ | ✅ | ✅ |

+| CLIPSeg | ❌ | ❌ | ✅ | ❌ | ❌ |

| CodeGen | ✅ | ✅ | ✅ | ❌ | ❌ |

| Conditional DETR | ❌ | ❌ | ✅ | ❌ | ❌ |

| ConvBERT | ✅ | ✅ | ✅ | ✅ | ❌ |

diff --git a/docs/source/en/model_doc/clipseg.mdx b/docs/source/en/model_doc/clipseg.mdx

new file mode 100644

index 0000000000000..c72154883d63b

--- /dev/null

+++ b/docs/source/en/model_doc/clipseg.mdx

@@ -0,0 +1,93 @@

+

+

+# CLIPSeg

+

+## Overview

+

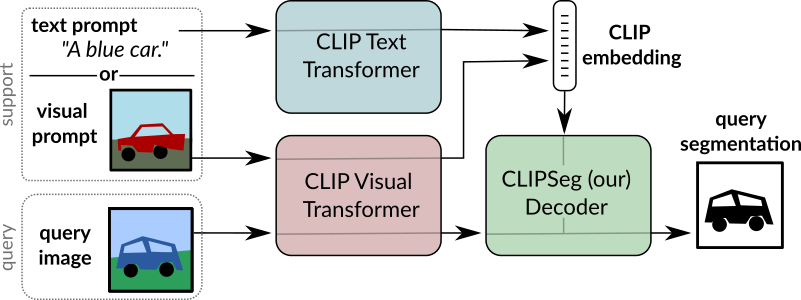

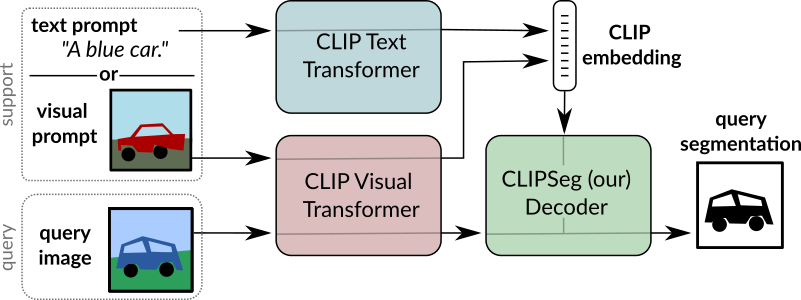

+The CLIPSeg model was proposed in [Image Segmentation Using Text and Image Prompts](https://arxiv.org/abs/2112.10003) by Timo Lüddecke

+and Alexander Ecker. CLIPSeg adds a minimal decoder on top of a frozen [CLIP](clip) model for zero- and one-shot image segmentation.

+

+The abstract from the paper is the following:

+

+*Image segmentation is usually addressed by training a

+model for a fixed set of object classes. Incorporating additional classes or more complex queries later is expensive

+as it requires re-training the model on a dataset that encompasses these expressions. Here we propose a system

+that can generate image segmentations based on arbitrary

+prompts at test time. A prompt can be either a text or an

+image. This approach enables us to create a unified model

+(trained once) for three common segmentation tasks, which

+come with distinct challenges: referring expression segmentation, zero-shot segmentation and one-shot segmentation.

+We build upon the CLIP model as a backbone which we extend with a transformer-based decoder that enables dense

+prediction. After training on an extended version of the

+PhraseCut dataset, our system generates a binary segmentation map for an image based on a free-text prompt or on

+an additional image expressing the query. We analyze different variants of the latter image-based prompts in detail.

+This novel hybrid input allows for dynamic adaptation not

+only to the three segmentation tasks mentioned above, but

+to any binary segmentation task where a text or image query

+can be formulated. Finally, we find our system to adapt well

+to generalized queries involving affordances or properties*

+

+Tips:

+

+- [`CLIPSegForImageSegmentation`] adds a decoder on top of [`CLIPSegModel`]. The latter is identical to [`CLIPModel`].

+- [`CLIPSegForImageSegmentation`] can generate image segmentations based on arbitrary prompts at test time. A prompt can be either a text

+(provided to the model as `input_ids`) or an image (provided to the model as `conditional_pixel_values`). One can also provide custom

+conditional embeddings (provided to the model as `conditional_embeddings`).

+

+ +

+ CLIPSeg overview. Taken from the original paper.

+

+This model was contributed by [nielsr](https://huggingface.co/nielsr).

+The original code can be found [here](https://github.com/timojl/clipseg).

+

+

+## CLIPSegConfig

+

+[[autodoc]] CLIPSegConfig

+ - from_text_vision_configs

+

+## CLIPSegTextConfig

+

+[[autodoc]] CLIPSegTextConfig

+

+## CLIPSegVisionConfig

+

+[[autodoc]] CLIPSegVisionConfig

+

+## CLIPSegProcessor

+

+[[autodoc]] CLIPSegProcessor

+

+## CLIPSegModel

+

+[[autodoc]] CLIPSegModel

+ - forward

+ - get_text_features

+ - get_image_features

+

+## CLIPSegTextModel

+

+[[autodoc]] CLIPSegTextModel

+ - forward

+

+## CLIPSegVisionModel

+

+[[autodoc]] CLIPSegVisionModel

+ - forward

+

+## CLIPSegForImageSegmentation

+

+[[autodoc]] CLIPSegForImageSegmentation

+ - forward

\ No newline at end of file

diff --git a/src/transformers/__init__.py b/src/transformers/__init__.py

index a3ce3fd1eb2ee..b377395a9046c 100755

--- a/src/transformers/__init__.py

+++ b/src/transformers/__init__.py

@@ -171,6 +171,13 @@

"CLIPTokenizer",

"CLIPVisionConfig",

],

+ "models.clipseg": [

+ "CLIPSEG_PRETRAINED_CONFIG_ARCHIVE_MAP",

+ "CLIPSegConfig",

+ "CLIPSegProcessor",

+ "CLIPSegTextConfig",

+ "CLIPSegVisionConfig",

+ ],

"models.codegen": ["CODEGEN_PRETRAINED_CONFIG_ARCHIVE_MAP", "CodeGenConfig", "CodeGenTokenizer"],

"models.conditional_detr": ["CONDITIONAL_DETR_PRETRAINED_CONFIG_ARCHIVE_MAP", "ConditionalDetrConfig"],

"models.convbert": ["CONVBERT_PRETRAINED_CONFIG_ARCHIVE_MAP", "ConvBertConfig", "ConvBertTokenizer"],

@@ -1074,6 +1081,16 @@

"CLIPVisionModel",

]

)

+ _import_structure["models.clipseg"].extend(

+ [

+ "CLIPSEG_PRETRAINED_MODEL_ARCHIVE_LIST",

+ "CLIPSegModel",

+ "CLIPSegPreTrainedModel",

+ "CLIPSegTextModel",

+ "CLIPSegVisionModel",

+ "CLIPSegForImageSegmentation",

+ ]

+ )

_import_structure["models.x_clip"].extend(

[

"XCLIP_PRETRAINED_MODEL_ARCHIVE_LIST",

@@ -3225,6 +3242,13 @@

CLIPTokenizer,

CLIPVisionConfig,

)

+ from .models.clipseg import (

+ CLIPSEG_PRETRAINED_CONFIG_ARCHIVE_MAP,

+ CLIPSegConfig,

+ CLIPSegProcessor,

+ CLIPSegTextConfig,

+ CLIPSegVisionConfig,

+ )

from .models.codegen import CODEGEN_PRETRAINED_CONFIG_ARCHIVE_MAP, CodeGenConfig, CodeGenTokenizer

from .models.conditional_detr import CONDITIONAL_DETR_PRETRAINED_CONFIG_ARCHIVE_MAP, ConditionalDetrConfig

from .models.convbert import CONVBERT_PRETRAINED_CONFIG_ARCHIVE_MAP, ConvBertConfig, ConvBertTokenizer

@@ -3993,6 +4017,14 @@

CLIPTextModel,

CLIPVisionModel,

)

+ from .models.clipseg import (

+ CLIPSEG_PRETRAINED_MODEL_ARCHIVE_LIST,

+ CLIPSegForImageSegmentation,

+ CLIPSegModel,

+ CLIPSegPreTrainedModel,

+ CLIPSegTextModel,

+ CLIPSegVisionModel,

+ )

from .models.codegen import (

CODEGEN_PRETRAINED_MODEL_ARCHIVE_LIST,

CodeGenForCausalLM,

diff --git a/src/transformers/models/__init__.py b/src/transformers/models/__init__.py

index 86a775a1eb2b8..03153725125cc 100644

--- a/src/transformers/models/__init__.py

+++ b/src/transformers/models/__init__.py

@@ -37,6 +37,7 @@

camembert,

canine,

clip,

+ clipseg,

codegen,

conditional_detr,

convbert,

diff --git a/src/transformers/models/auto/configuration_auto.py b/src/transformers/models/auto/configuration_auto.py

index 68f29f89ae50e..d8b59f123f676 100644

--- a/src/transformers/models/auto/configuration_auto.py

+++ b/src/transformers/models/auto/configuration_auto.py

@@ -42,6 +42,7 @@

("camembert", "CamembertConfig"),

("canine", "CanineConfig"),

("clip", "CLIPConfig"),

+ ("clipseg", "CLIPSegConfig"),

("codegen", "CodeGenConfig"),

("conditional_detr", "ConditionalDetrConfig"),

("convbert", "ConvBertConfig"),

@@ -182,6 +183,7 @@

("camembert", "CAMEMBERT_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("canine", "CANINE_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("clip", "CLIP_PRETRAINED_CONFIG_ARCHIVE_MAP"),

+ ("clipseg", "CLIPSEG_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("codegen", "CODEGEN_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("conditional_detr", "CONDITIONAL_DETR_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("convbert", "CONVBERT_PRETRAINED_CONFIG_ARCHIVE_MAP"),

@@ -315,6 +317,7 @@

("camembert", "CamemBERT"),

("canine", "CANINE"),

("clip", "CLIP"),

+ ("clipseg", "CLIPSeg"),

("codegen", "CodeGen"),

("conditional_detr", "Conditional DETR"),

("convbert", "ConvBERT"),

diff --git a/src/transformers/models/auto/feature_extraction_auto.py b/src/transformers/models/auto/feature_extraction_auto.py

index 76d38f95ab151..bc30cc21b60d0 100644

--- a/src/transformers/models/auto/feature_extraction_auto.py

+++ b/src/transformers/models/auto/feature_extraction_auto.py

@@ -39,6 +39,7 @@

[

("beit", "BeitFeatureExtractor"),

("clip", "CLIPFeatureExtractor"),

+ ("clipseg", "ViTFeatureExtractor"),

("conditional_detr", "ConditionalDetrFeatureExtractor"),

("convnext", "ConvNextFeatureExtractor"),

("cvt", "ConvNextFeatureExtractor"),

diff --git a/src/transformers/models/auto/modeling_auto.py b/src/transformers/models/auto/modeling_auto.py

index 3da1dc1790572..7b6e701175859 100644

--- a/src/transformers/models/auto/modeling_auto.py

+++ b/src/transformers/models/auto/modeling_auto.py

@@ -41,6 +41,7 @@

("camembert", "CamembertModel"),

("canine", "CanineModel"),

("clip", "CLIPModel"),

+ ("clipseg", "CLIPSegModel"),

("codegen", "CodeGenModel"),

("conditional_detr", "ConditionalDetrModel"),

("convbert", "ConvBertModel"),

@@ -813,6 +814,7 @@

[

# Model for Zero Shot Image Classification mapping

("clip", "CLIPModel"),

+ ("clipseg", "CLIPSegModel"),

]

)

diff --git a/src/transformers/models/auto/processing_auto.py b/src/transformers/models/auto/processing_auto.py

index 3e31a14d25817..f7bb87e25e2d7 100644

--- a/src/transformers/models/auto/processing_auto.py

+++ b/src/transformers/models/auto/processing_auto.py

@@ -40,6 +40,7 @@

PROCESSOR_MAPPING_NAMES = OrderedDict(

[

("clip", "CLIPProcessor"),

+ ("clipseg", "CLIPSegProcessor"),

("flava", "FlavaProcessor"),

("groupvit", "CLIPProcessor"),

("layoutlmv2", "LayoutLMv2Processor"),

diff --git a/src/transformers/models/auto/tokenization_auto.py b/src/transformers/models/auto/tokenization_auto.py

index 46e57ac58bd4c..d5374e6f42e00 100644

--- a/src/transformers/models/auto/tokenization_auto.py

+++ b/src/transformers/models/auto/tokenization_auto.py

@@ -93,6 +93,13 @@

"CLIPTokenizerFast" if is_tokenizers_available() else None,

),

),

+ (

+ "clipseg",

+ (

+ "CLIPTokenizer",

+ "CLIPTokenizerFast" if is_tokenizers_available() else None,

+ ),

+ ),

("codegen", ("CodeGenTokenizer", "CodeGenTokenizerFast" if is_tokenizers_available() else None)),

("convbert", ("ConvBertTokenizer", "ConvBertTokenizerFast" if is_tokenizers_available() else None)),

(

diff --git a/src/transformers/models/clipseg/__init__.py b/src/transformers/models/clipseg/__init__.py

new file mode 100644

index 0000000000000..f6b09b9af9757

--- /dev/null

+++ b/src/transformers/models/clipseg/__init__.py

@@ -0,0 +1,75 @@

+# flake8: noqa

+# There's no way to ignore "F401 '...' imported but unused" warnings in this

+# module, but to preserve other warnings. So, don't check this module at all.

+

+# Copyright 2022 The HuggingFace Team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+from typing import TYPE_CHECKING

+

+from ...utils import OptionalDependencyNotAvailable, _LazyModule, is_torch_available

+

+

+_import_structure = {

+ "configuration_clipseg": [

+ "CLIPSEG_PRETRAINED_CONFIG_ARCHIVE_MAP",

+ "CLIPSegConfig",

+ "CLIPSegTextConfig",

+ "CLIPSegVisionConfig",

+ ],

+ "processing_clipseg": ["CLIPSegProcessor"],

+}

+

+try:

+ if not is_torch_available():

+ raise OptionalDependencyNotAvailable()

+except OptionalDependencyNotAvailable:

+ pass

+else:

+ _import_structure["modeling_clipseg"] = [

+ "CLIPSEG_PRETRAINED_MODEL_ARCHIVE_LIST",

+ "CLIPSegModel",

+ "CLIPSegPreTrainedModel",

+ "CLIPSegTextModel",

+ "CLIPSegVisionModel",

+ "CLIPSegForImageSegmentation",

+ ]

+

+if TYPE_CHECKING:

+ from .configuration_clipseg import (

+ CLIPSEG_PRETRAINED_CONFIG_ARCHIVE_MAP,

+ CLIPSegConfig,

+ CLIPSegTextConfig,

+ CLIPSegVisionConfig,

+ )

+ from .processing_clipseg import CLIPSegProcessor

+

+ try:

+ if not is_torch_available():

+ raise OptionalDependencyNotAvailable()

+ except OptionalDependencyNotAvailable:

+ pass

+ else:

+ from .modeling_clipseg import (

+ CLIPSEG_PRETRAINED_MODEL_ARCHIVE_LIST,

+ CLIPSegForImageSegmentation,

+ CLIPSegModel,

+ CLIPSegPreTrainedModel,

+ CLIPSegTextModel,

+ CLIPSegVisionModel,

+ )

+

+else:

+ import sys

+

+ sys.modules[__name__] = _LazyModule(__name__, globals()["__file__"], _import_structure, module_spec=__spec__)

diff --git a/src/transformers/models/clipseg/configuration_clipseg.py b/src/transformers/models/clipseg/configuration_clipseg.py

new file mode 100644

index 0000000000000..1fe27b0d0b0f0

--- /dev/null

+++ b/src/transformers/models/clipseg/configuration_clipseg.py

@@ -0,0 +1,383 @@

+# coding=utf-8

+# Copyright 2022 The HuggingFace Inc. team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+""" CLIPSeg model configuration"""

+

+import copy

+import os

+from typing import Union

+

+from ...configuration_utils import PretrainedConfig

+from ...utils import logging

+

+

+logger = logging.get_logger(__name__)

+

+CLIPSEG_PRETRAINED_CONFIG_ARCHIVE_MAP = {

+ "CIDAS/clipseg-rd64": "https://huggingface.co/CIDAS/clipseg-rd64/resolve/main/config.json",

+}

+

+

+class CLIPSegTextConfig(PretrainedConfig):

+ r"""

+ This is the configuration class to store the configuration of a [`CLIPSegModel`]. It is used to instantiate an

+ CLIPSeg model according to the specified arguments, defining the model architecture. Instantiating a configuration

+ with the defaults will yield a similar configuration to that of the CLIPSeg

+ [CIDAS/clipseg-rd64](https://huggingface.co/CIDAS/clipseg-rd64) architecture.

+

+ Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

+ documentation from [`PretrainedConfig`] for more information.

+

+ Args:

+ vocab_size (`int`, *optional*, defaults to 49408):

+ Vocabulary size of the CLIPSeg text model. Defines the number of different tokens that can be represented

+ by the `inputs_ids` passed when calling [`CLIPSegModel`].

+ hidden_size (`int`, *optional*, defaults to 512):

+ Dimensionality of the encoder layers and the pooler layer.

+ intermediate_size (`int`, *optional*, defaults to 2048):

+ Dimensionality of the "intermediate" (i.e., feed-forward) layer in the Transformer encoder.

+ num_hidden_layers (`int`, *optional*, defaults to 12):

+ Number of hidden layers in the Transformer encoder.

+ num_attention_heads (`int`, *optional*, defaults to 8):

+ Number of attention heads for each attention layer in the Transformer encoder.

+ max_position_embeddings (`int`, *optional*, defaults to 77):

+ The maximum sequence length that this model might ever be used with. Typically set this to something large

+ just in case (e.g., 512 or 1024 or 2048).

+ hidden_act (`str` or `function`, *optional*, defaults to `"quick_gelu"`):

+ The non-linear activation function (function or string) in the encoder and pooler. If string, `"gelu"`,

+ `"relu"`, `"selu"` and `"gelu_new"` ``"quick_gelu"` are supported. layer_norm_eps (`float`, *optional*,

+ defaults to 1e-5): The epsilon used by the layer normalization layers.

+ attention_dropout (`float`, *optional*, defaults to 0.0):

+ The dropout ratio for the attention probabilities.

+ dropout (`float`, *optional*, defaults to 0.0):

+ The dropout probabilitiy for all fully connected layers in the embeddings, encoder, and pooler.

+ initializer_range (`float`, *optional*, defaults to 0.02):

+ The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

+ initializer_factor (`float``, *optional*, defaults to 1):

+ A factor for initializing all weight matrices (should be kept to 1, used internally for initialization

+ testing).

+

+ Example:

+

+ ```python

+ >>> from transformers import CLIPSegTextConfig, CLIPSegTextModel

+

+ >>> # Initializing a CLIPSegTextConfig with CIDAS/clipseg-rd64 style configuration

+ >>> configuration = CLIPSegTextConfig()

+

+ >>> # Initializing a CLIPSegTextModel (with random weights) from the CIDAS/clipseg-rd64 style configuration

+ >>> model = CLIPSegTextModel(configuration)

+

+ >>> # Accessing the model configuration

+ >>> configuration = model.config

+ ```"""

+ model_type = "clipseg_text_model"

+

+ def __init__(

+ self,

+ vocab_size=49408,

+ hidden_size=512,

+ intermediate_size=2048,

+ num_hidden_layers=12,

+ num_attention_heads=8,

+ max_position_embeddings=77,

+ hidden_act="quick_gelu",

+ layer_norm_eps=0.00001,

+ dropout=0.0,

+ attention_dropout=0.0,

+ initializer_range=0.02,

+ initializer_factor=1.0,

+ pad_token_id=1,

+ bos_token_id=0,

+ eos_token_id=2,

+ **kwargs

+ ):

+ super().__init__(pad_token_id=pad_token_id, bos_token_id=bos_token_id, eos_token_id=eos_token_id, **kwargs)

+

+ self.vocab_size = vocab_size

+ self.hidden_size = hidden_size

+ self.intermediate_size = intermediate_size

+ self.dropout = dropout

+ self.num_hidden_layers = num_hidden_layers

+ self.num_attention_heads = num_attention_heads

+ self.max_position_embeddings = max_position_embeddings

+ self.layer_norm_eps = layer_norm_eps

+ self.hidden_act = hidden_act

+ self.initializer_range = initializer_range

+ self.initializer_factor = initializer_factor

+ self.attention_dropout = attention_dropout

+

+ @classmethod

+ def from_pretrained(cls, pretrained_model_name_or_path: Union[str, os.PathLike], **kwargs) -> "PretrainedConfig":

+

+ config_dict, kwargs = cls.get_config_dict(pretrained_model_name_or_path, **kwargs)

+

+ # get the text config dict if we are loading from CLIPSegConfig

+ if config_dict.get("model_type") == "clipseg":

+ config_dict = config_dict["text_config"]

+

+ if "model_type" in config_dict and hasattr(cls, "model_type") and config_dict["model_type"] != cls.model_type:

+ logger.warning(

+ f"You are using a model of type {config_dict['model_type']} to instantiate a model of type "

+ f"{cls.model_type}. This is not supported for all configurations of models and can yield errors."

+ )

+

+ return cls.from_dict(config_dict, **kwargs)

+

+

+class CLIPSegVisionConfig(PretrainedConfig):

+ r"""

+ This is the configuration class to store the configuration of a [`CLIPSegModel`]. It is used to instantiate an

+ CLIPSeg model according to the specified arguments, defining the model architecture. Instantiating a configuration

+ with the defaults will yield a similar configuration to that of the CLIPSeg

+ [CIDAS/clipseg-rd64](https://huggingface.co/CIDAS/clipseg-rd64) architecture.

+

+ Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

+ documentation from [`PretrainedConfig`] for more information.

+

+ Args:

+ hidden_size (`int`, *optional*, defaults to 768):

+ Dimensionality of the encoder layers and the pooler layer.

+ intermediate_size (`int`, *optional*, defaults to 3072):

+ Dimensionality of the "intermediate" (i.e., feed-forward) layer in the Transformer encoder.

+ num_hidden_layers (`int`, *optional*, defaults to 12):

+ Number of hidden layers in the Transformer encoder.

+ num_attention_heads (`int`, *optional*, defaults to 12):

+ Number of attention heads for each attention layer in the Transformer encoder.

+ image_size (`int`, *optional*, defaults to 224):

+ The size (resolution) of each image.

+ patch_size (`int`, *optional*, defaults to 32):

+ The size (resolution) of each patch.

+ hidden_act (`str` or `function`, *optional*, defaults to `"quick_gelu"`):

+ The non-linear activation function (function or string) in the encoder and pooler. If string, `"gelu"`,

+ `"relu"`, `"selu"` and `"gelu_new"` ``"quick_gelu"` are supported. layer_norm_eps (`float`, *optional*,

+ defaults to 1e-5): The epsilon used by the layer normalization layers.

+ dropout (`float`, *optional*, defaults to 0.0):

+ The dropout probabilitiy for all fully connected layers in the embeddings, encoder, and pooler.

+ attention_dropout (`float`, *optional*, defaults to 0.0):

+ The dropout ratio for the attention probabilities.

+ initializer_range (`float`, *optional*, defaults to 0.02):

+ The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

+ initializer_factor (`float``, *optional*, defaults to 1):

+ A factor for initializing all weight matrices (should be kept to 1, used internally for initialization

+ testing).

+

+ Example:

+

+ ```python

+ >>> from transformers import CLIPSegVisionConfig, CLIPSegVisionModel

+

+ >>> # Initializing a CLIPSegVisionConfig with CIDAS/clipseg-rd64 style configuration

+ >>> configuration = CLIPSegVisionConfig()

+

+ >>> # Initializing a CLIPSegVisionModel (with random weights) from the CIDAS/clipseg-rd64 style configuration

+ >>> model = CLIPSegVisionModel(configuration)

+

+ >>> # Accessing the model configuration

+ >>> configuration = model.config

+ ```"""

+

+ model_type = "clipseg_vision_model"

+

+ def __init__(

+ self,

+ hidden_size=768,

+ intermediate_size=3072,

+ num_hidden_layers=12,

+ num_attention_heads=12,

+ num_channels=3,

+ image_size=224,

+ patch_size=32,

+ hidden_act="quick_gelu",

+ layer_norm_eps=0.00001,

+ dropout=0.0,

+ attention_dropout=0.0,

+ initializer_range=0.02,

+ initializer_factor=1.0,

+ **kwargs

+ ):

+ super().__init__(**kwargs)

+

+ self.hidden_size = hidden_size

+ self.intermediate_size = intermediate_size

+ self.dropout = dropout

+ self.num_hidden_layers = num_hidden_layers

+ self.num_attention_heads = num_attention_heads

+ self.num_channels = num_channels

+ self.patch_size = patch_size

+ self.image_size = image_size

+ self.initializer_range = initializer_range

+ self.initializer_factor = initializer_factor

+ self.attention_dropout = attention_dropout

+ self.layer_norm_eps = layer_norm_eps

+ self.hidden_act = hidden_act

+

+ @classmethod

+ def from_pretrained(cls, pretrained_model_name_or_path: Union[str, os.PathLike], **kwargs) -> "PretrainedConfig":

+

+ config_dict, kwargs = cls.get_config_dict(pretrained_model_name_or_path, **kwargs)

+

+ # get the vision config dict if we are loading from CLIPSegConfig

+ if config_dict.get("model_type") == "clipseg":

+ config_dict = config_dict["vision_config"]

+

+ if "model_type" in config_dict and hasattr(cls, "model_type") and config_dict["model_type"] != cls.model_type:

+ logger.warning(

+ f"You are using a model of type {config_dict['model_type']} to instantiate a model of type "

+ f"{cls.model_type}. This is not supported for all configurations of models and can yield errors."

+ )

+

+ return cls.from_dict(config_dict, **kwargs)

+

+

+class CLIPSegConfig(PretrainedConfig):

+ r"""

+ [`CLIPSegConfig`] is the configuration class to store the configuration of a [`CLIPSegModel`]. It is used to

+ instantiate a CLIPSeg model according to the specified arguments, defining the text model and vision model configs.

+ Instantiating a configuration with the defaults will yield a similar configuration to that of the CLIPSeg

+ [CIDAS/clipseg-rd64](https://huggingface.co/CIDAS/clipseg-rd64) architecture.

+

+ Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

+ documentation from [`PretrainedConfig`] for more information.

+

+ Args:

+ text_config (`dict`, *optional*):

+ Dictionary of configuration options used to initialize [`CLIPSegTextConfig`].

+ vision_config (`dict`, *optional*):

+ Dictionary of configuration options used to initialize [`CLIPSegVisionConfig`].

+ projection_dim (`int`, *optional*, defaults to 512):

+ Dimensionality of text and vision projection layers.

+ logit_scale_init_value (`float`, *optional*, defaults to 2.6592):

+ The inital value of the *logit_scale* paramter. Default is used as per the original CLIPSeg implementation.

+ extract_layers (`List[int]`, *optional*, defaults to [3, 6, 9]):

+ Layers to extract when forwarding the query image through the frozen visual backbone of CLIP.

+ reduce_dim (`int`, *optional*, defaults to 64):

+ Dimensionality to reduce the CLIP vision embedding.

+ decoder_num_attention_heads (`int`, *optional*, defaults to 4):

+ Number of attention heads in the decoder of CLIPSeg.

+ decoder_attention_dropout (`float`, *optional*, defaults to 0.0):

+ The dropout ratio for the attention probabilities.

+ decoder_hidden_act (`str` or `function`, *optional*, defaults to `"quick_gelu"`):

+ The non-linear activation function (function or string) in the encoder and pooler. If string, `"gelu"`,

+ `"relu"`, `"selu"` and `"gelu_new"` ``"quick_gelu"` are supported. layer_norm_eps (`float`, *optional*,

+ defaults to 1e-5): The epsilon used by the layer normalization layers.

+ decoder_intermediate_size (`int`, *optional*, defaults to 2048):

+ Dimensionality of the "intermediate" (i.e., feed-forward) layers in the Transformer decoder.

+ conditional_layer (`int`, *optional*, defaults to 0):

+ The layer to use of the Transformer encoder whose activations will be combined with the condition

+ embeddings using FiLM (Feature-wise Linear Modulation). If 0, the last layer is used.

+ use_complex_transposed_convolution (`bool`, *optional*, defaults to `False`):

+ Whether to use a more complex transposed convolution in the decoder, enabling more fine-grained

+ segmentation.

+ kwargs (*optional*):

+ Dictionary of keyword arguments.

+

+ Example:

+

+ ```python

+ >>> from transformers import CLIPSegConfig, CLIPSegModel

+

+ >>> # Initializing a CLIPSegConfig with CIDAS/clipseg-rd64 style configuration

+ >>> configuration = CLIPSegConfig()

+

+ >>> # Initializing a CLIPSegModel (with random weights) from the CIDAS/clipseg-rd64 style configuration

+ >>> model = CLIPSegModel(configuration)

+

+ >>> # Accessing the model configuration

+ >>> configuration = model.config

+

+ >>> # We can also initialize a CLIPSegConfig from a CLIPSegTextConfig and a CLIPSegVisionConfig

+

+ >>> # Initializing a CLIPSegText and CLIPSegVision configuration

+ >>> config_text = CLIPSegTextConfig()

+ >>> config_vision = CLIPSegVisionConfig()

+

+ >>> config = CLIPSegConfig.from_text_vision_configs(config_text, config_vision)

+ ```"""

+

+ model_type = "clipseg"

+ is_composition = True

+

+ def __init__(

+ self,

+ text_config=None,

+ vision_config=None,

+ projection_dim=512,

+ logit_scale_init_value=2.6592,

+ extract_layers=[3, 6, 9],

+ reduce_dim=64,

+ decoder_num_attention_heads=4,

+ decoder_attention_dropout=0.0,

+ decoder_hidden_act="quick_gelu",

+ decoder_intermediate_size=2048,

+ conditional_layer=0,

+ use_complex_transposed_convolution=False,

+ **kwargs

+ ):

+ super().__init__(**kwargs)

+

+ text_config_dict = kwargs.pop("text_config_dict", None)

+ vision_config_dict = kwargs.pop("vision_config_dict", None)

+ if text_config_dict is not None:

+ text_config = text_config_dict

+ if vision_config_dict is not None:

+ vision_config = vision_config_dict

+

+ if text_config is None:

+ text_config = {}

+ logger.info("text_config is None. Initializing the CLIPSegTextConfig with default values.")

+

+ if vision_config is None:

+ vision_config = {}

+ logger.info("vision_config is None. initializing the CLIPSegVisionConfig with default values.")

+

+ self.text_config = CLIPSegTextConfig(**text_config)

+ self.vision_config = CLIPSegVisionConfig(**vision_config)

+

+ self.projection_dim = projection_dim

+ self.logit_scale_init_value = logit_scale_init_value

+ self.extract_layers = extract_layers

+ self.reduce_dim = reduce_dim

+ self.decoder_num_attention_heads = decoder_num_attention_heads

+ self.decoder_attention_dropout = decoder_attention_dropout

+ self.decoder_hidden_act = decoder_hidden_act

+ self.decoder_intermediate_size = decoder_intermediate_size

+ self.conditional_layer = conditional_layer

+ self.initializer_factor = 1.0

+ self.use_complex_transposed_convolution = use_complex_transposed_convolution

+

+ @classmethod

+ def from_text_vision_configs(cls, text_config: CLIPSegTextConfig, vision_config: CLIPSegVisionConfig, **kwargs):

+ r"""

+ Instantiate a [`CLIPSegConfig`] (or a derived class) from clipseg text model configuration and clipseg vision

+ model configuration.

+

+ Returns:

+ [`CLIPSegConfig`]: An instance of a configuration object

+ """

+

+ return cls(text_config=text_config.to_dict(), vision_config=vision_config.to_dict(), **kwargs)

+

+ def to_dict(self):

+ """

+ Serializes this instance to a Python dictionary. Override the default [`~PretrainedConfig.to_dict`].

+

+ Returns:

+ `Dict[str, any]`: Dictionary of all the attributes that make up this configuration instance,

+ """

+ output = copy.deepcopy(self.__dict__)

+ output["text_config"] = self.text_config.to_dict()

+ output["vision_config"] = self.vision_config.to_dict()

+ output["model_type"] = self.__class__.model_type

+ return output

diff --git a/src/transformers/models/clipseg/convert_clipseg_original_pytorch_to_hf.py b/src/transformers/models/clipseg/convert_clipseg_original_pytorch_to_hf.py

new file mode 100644

index 0000000000000..778dbca299678

--- /dev/null

+++ b/src/transformers/models/clipseg/convert_clipseg_original_pytorch_to_hf.py

@@ -0,0 +1,264 @@

+# coding=utf-8

+# Copyright 2022 The HuggingFace Inc. team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+"""Convert CLIPSeg checkpoints from the original repository. URL: https://github.com/timojl/clipseg."""

+

+import argparse

+

+import torch

+from PIL import Image

+

+import requests

+from transformers import (

+ CLIPSegConfig,

+ CLIPSegForImageSegmentation,

+ CLIPSegProcessor,

+ CLIPSegTextConfig,

+ CLIPSegVisionConfig,

+ CLIPTokenizer,

+ ViTFeatureExtractor,

+)

+

+

+def get_clipseg_config(model_name):

+ text_config = CLIPSegTextConfig()

+ vision_config = CLIPSegVisionConfig(patch_size=16)

+

+ use_complex_transposed_convolution = True if "refined" in model_name else False

+ reduce_dim = 16 if "rd16" in model_name else 64

+

+ config = CLIPSegConfig.from_text_vision_configs(

+ text_config,

+ vision_config,

+ use_complex_transposed_convolution=use_complex_transposed_convolution,

+ reduce_dim=reduce_dim,

+ )

+ return config

+

+

+def rename_key(name):

+ # update prefixes

+ if "clip_model" in name:

+ name = name.replace("clip_model", "clip")

+ if "transformer" in name:

+ if "visual" in name:

+ name = name.replace("visual.transformer", "vision_model")

+ else:

+ name = name.replace("transformer", "text_model")

+ if "resblocks" in name:

+ name = name.replace("resblocks", "encoder.layers")

+ if "ln_1" in name:

+ name = name.replace("ln_1", "layer_norm1")

+ if "ln_2" in name:

+ name = name.replace("ln_2", "layer_norm2")

+ if "c_fc" in name:

+ name = name.replace("c_fc", "fc1")

+ if "c_proj" in name:

+ name = name.replace("c_proj", "fc2")

+ if "attn" in name and "self" not in name:

+ name = name.replace("attn", "self_attn")

+ # text encoder

+ if "token_embedding" in name:

+ name = name.replace("token_embedding", "text_model.embeddings.token_embedding")

+ if "positional_embedding" in name and "visual" not in name:

+ name = name.replace("positional_embedding", "text_model.embeddings.position_embedding.weight")

+ if "ln_final" in name:

+ name = name.replace("ln_final", "text_model.final_layer_norm")

+ # vision encoder

+ if "visual.class_embedding" in name:

+ name = name.replace("visual.class_embedding", "vision_model.embeddings.class_embedding")

+ if "visual.conv1" in name:

+ name = name.replace("visual.conv1", "vision_model.embeddings.patch_embedding")

+ if "visual.positional_embedding" in name:

+ name = name.replace("visual.positional_embedding", "vision_model.embeddings.position_embedding.weight")

+ if "visual.ln_pre" in name:

+ name = name.replace("visual.ln_pre", "vision_model.pre_layrnorm")

+ if "visual.ln_post" in name:

+ name = name.replace("visual.ln_post", "vision_model.post_layernorm")

+ # projection layers

+ if "visual.proj" in name:

+ name = name.replace("visual.proj", "visual_projection.weight")

+ if "text_projection" in name:

+ name = name.replace("text_projection", "text_projection.weight")

+ # decoder

+ if "trans_conv" in name:

+ name = name.replace("trans_conv", "transposed_convolution")

+ if "film_mul" in name or "film_add" in name or "reduce" in name or "transposed_convolution" in name:

+ name = "decoder." + name

+ if "blocks" in name:

+ name = name.replace("blocks", "decoder.layers")

+ if "linear1" in name:

+ name = name.replace("linear1", "mlp.fc1")

+ if "linear2" in name:

+ name = name.replace("linear2", "mlp.fc2")

+ if "norm1" in name and "layer_" not in name:

+ name = name.replace("norm1", "layer_norm1")

+ if "norm2" in name and "layer_" not in name:

+ name = name.replace("norm2", "layer_norm2")

+

+ return name

+

+

+def convert_state_dict(orig_state_dict, config):

+ for key in orig_state_dict.copy().keys():

+ val = orig_state_dict.pop(key)

+

+ if key.startswith("clip_model") and "attn.in_proj" in key:

+ key_split = key.split(".")

+ if "visual" in key:

+ layer_num = int(key_split[4])

+ dim = config.vision_config.hidden_size

+ prefix = "vision_model"

+ else:

+ layer_num = int(key_split[3])

+ dim = config.text_config.hidden_size

+ prefix = "text_model"

+

+ if "weight" in key:

+ orig_state_dict[f"clip.{prefix}.encoder.layers.{layer_num}.self_attn.q_proj.weight"] = val[:dim, :]

+ orig_state_dict[f"clip.{prefix}.encoder.layers.{layer_num}.self_attn.k_proj.weight"] = val[

+ dim : dim * 2, :

+ ]

+ orig_state_dict[f"clip.{prefix}.encoder.layers.{layer_num}.self_attn.v_proj.weight"] = val[-dim:, :]

+ else:

+ orig_state_dict[f"clip.{prefix}.encoder.layers.{layer_num}.self_attn.q_proj.bias"] = val[:dim]

+ orig_state_dict[f"clip.{prefix}.encoder.layers.{layer_num}.self_attn.k_proj.bias"] = val[dim : dim * 2]

+ orig_state_dict[f"clip.{prefix}.encoder.layers.{layer_num}.self_attn.v_proj.bias"] = val[-dim:]

+ elif "self_attn" in key and "out_proj" not in key:

+ key_split = key.split(".")

+ layer_num = int(key_split[1])

+ dim = config.reduce_dim

+ if "weight" in key:

+ orig_state_dict[f"decoder.layers.{layer_num}.self_attn.q_proj.weight"] = val[:dim, :]

+ orig_state_dict[f"decoder.layers.{layer_num}.self_attn.k_proj.weight"] = val[dim : dim * 2, :]

+ orig_state_dict[f"decoder.layers.{layer_num}.self_attn.v_proj.weight"] = val[-dim:, :]

+ else:

+ orig_state_dict[f"decoder.layers.{layer_num}.self_attn.q_proj.bias"] = val[:dim]

+ orig_state_dict[f"decoder.layers.{layer_num}.self_attn.k_proj.bias"] = val[dim : dim * 2]

+ orig_state_dict[f"decoder.layers.{layer_num}.self_attn.v_proj.bias"] = val[-dim:]

+ else:

+ new_name = rename_key(key)

+ if "visual_projection" in new_name or "text_projection" in new_name:

+ val = val.T

+ orig_state_dict[new_name] = val

+

+ return orig_state_dict

+

+

+# We will verify our results on an image of cute cats

+def prepare_img():

+ url = "http://images.cocodataset.org/val2017/000000039769.jpg"

+ image = Image.open(requests.get(url, stream=True).raw)

+ return image

+

+

+def convert_clipseg_checkpoint(model_name, checkpoint_path, pytorch_dump_folder_path, push_to_hub):

+ config = get_clipseg_config(model_name)

+ model = CLIPSegForImageSegmentation(config)

+ model.eval()

+

+ state_dict = torch.load(checkpoint_path, map_location="cpu")

+

+ # remove some keys

+ for key in state_dict.copy().keys():

+ if key.startswith("model"):

+ state_dict.pop(key, None)

+

+ # rename some keys

+ state_dict = convert_state_dict(state_dict, config)

+ missing_keys, unexpected_keys = model.load_state_dict(state_dict, strict=False)

+

+ if missing_keys != ["clip.text_model.embeddings.position_ids", "clip.vision_model.embeddings.position_ids"]:

+ raise ValueError("Missing keys that are not expected: {}".format(missing_keys))

+ if unexpected_keys != ["decoder.reduce.weight", "decoder.reduce.bias"]:

+ raise ValueError(f"Unexpected keys: {unexpected_keys}")

+

+ feature_extractor = ViTFeatureExtractor(size=352)

+ tokenizer = CLIPTokenizer.from_pretrained("openai/clip-vit-base-patch32")

+ processor = CLIPSegProcessor(feature_extractor=feature_extractor, tokenizer=tokenizer)

+

+ image = prepare_img()

+ text = ["a glass", "something to fill", "wood", "a jar"]

+

+ inputs = processor(text=text, images=[image] * len(text), padding="max_length", return_tensors="pt")

+

+ with torch.no_grad():

+ outputs = model(**inputs)

+

+ # verify values

+ expected_conditional = torch.tensor([0.1110, -0.1882, 0.1645])

+ expected_pooled_output = torch.tensor([0.2692, -0.7197, -0.1328])

+ if model_name == "clipseg-rd64-refined":

+ expected_masks_slice = torch.tensor(

+ [[-10.0407, -9.9431, -10.2646], [-9.9751, -9.7064, -9.9586], [-9.6891, -9.5645, -9.9618]]

+ )

+ elif model_name == "clipseg-rd64":

+ expected_masks_slice = torch.tensor(

+ [[-7.2877, -7.2711, -7.2463], [-7.2652, -7.2780, -7.2520], [-7.2239, -7.2204, -7.2001]]

+ )

+ elif model_name == "clipseg-rd16":

+ expected_masks_slice = torch.tensor(

+ [[-6.3955, -6.4055, -6.4151], [-6.3911, -6.4033, -6.4100], [-6.3474, -6.3702, -6.3762]]

+ )

+ else:

+ raise ValueError(f"Model name {model_name} not supported.")

+

+ assert torch.allclose(outputs.logits[0, :3, :3], expected_masks_slice, atol=1e-3)

+ assert torch.allclose(outputs.conditional_embeddings[0, :3], expected_conditional, atol=1e-3)

+ assert torch.allclose(outputs.pooled_output[0, :3], expected_pooled_output, atol=1e-3)

+ print("Looks ok!")

+

+ if pytorch_dump_folder_path is not None:

+ print(f"Saving model and processor to {pytorch_dump_folder_path}")

+ model.save_pretrained(pytorch_dump_folder_path)

+ processor.save_pretrained(pytorch_dump_folder_path)

+

+ if push_to_hub:

+ print(f"Pushing model and processor for {model_name} to the hub")

+ model.push_to_hub(f"CIDAS/{model_name}")

+ processor.push_to_hub(f"CIDAS/{model_name}")

+

+

+if __name__ == "__main__":

+ parser = argparse.ArgumentParser()

+ # Required parameters

+ parser.add_argument(

+ "--model_name",

+ default="clipseg-rd64",

+ type=str,

+ choices=["clipseg-rd16", "clipseg-rd64", "clipseg-rd64-refined"],

+ help=(

+ "Name of the model. Supported models are: clipseg-rd64, clipseg-rd16 and clipseg-rd64-refined (rd meaning"

+ " reduce dimension)"

+ ),

+ )

+ parser.add_argument(

+ "--checkpoint_path",

+ default="/Users/nielsrogge/Documents/CLIPSeg/clip_plus_rd64-uni.pth",

+ type=str,

+ help=(

+ "Path to the original checkpoint. Note that the script assumes that the checkpoint includes both CLIP and"

+ " the decoder weights."

+ ),

+ )

+ parser.add_argument(

+ "--pytorch_dump_folder_path", default=None, type=str, help="Path to the output PyTorch model directory."

+ )

+ parser.add_argument(

+ "--push_to_hub", action="store_true", help="Whether or not to push the converted model to the 🤗 hub."

+ )

+

+ args = parser.parse_args()

+ convert_clipseg_checkpoint(args.model_name, args.checkpoint_path, args.pytorch_dump_folder_path, args.push_to_hub)

diff --git a/src/transformers/models/clipseg/modeling_clipseg.py b/src/transformers/models/clipseg/modeling_clipseg.py

new file mode 100644

index 0000000000000..87caf24ed4bf6

--- /dev/null

+++ b/src/transformers/models/clipseg/modeling_clipseg.py

@@ -0,0 +1,1493 @@

+# coding=utf-8

+# Copyright 2022 The OpenAI Team Authors and The HuggingFace Team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+""" PyTorch CLIPSeg model."""

+

+import copy

+import math

+from dataclasses import dataclass

+from typing import Any, Optional, Tuple, Union

+

+import torch

+import torch.utils.checkpoint

+from torch import nn

+

+from ...activations import ACT2FN

+from ...modeling_outputs import BaseModelOutput, BaseModelOutputWithPooling

+from ...modeling_utils import PreTrainedModel

+from ...utils import (

+ ModelOutput,

+ add_start_docstrings,

+ add_start_docstrings_to_model_forward,

+ logging,

+ replace_return_docstrings,

+)

+from .configuration_clipseg import CLIPSegConfig, CLIPSegTextConfig, CLIPSegVisionConfig

+

+

+logger = logging.get_logger(__name__)

+

+

+_CHECKPOINT_FOR_DOC = "CIDAS/clipseg-rd64-refined"

+

+CLIPSEG_PRETRAINED_MODEL_ARCHIVE_LIST = [

+ "CIDAS/clipseg-rd64-refined",

+ # See all CLIPSeg models at https://huggingface.co/models?filter=clipseg

+]

+

+

+# Copied from transformers.models.bart.modeling_bart._expand_mask

+def _expand_mask(mask: torch.Tensor, dtype: torch.dtype, tgt_len: Optional[int] = None):

+ """

+ Expands attention_mask from `[bsz, seq_len]` to `[bsz, 1, tgt_seq_len, src_seq_len]`.

+ """

+ bsz, src_len = mask.size()

+ tgt_len = tgt_len if tgt_len is not None else src_len

+

+ expanded_mask = mask[:, None, None, :].expand(bsz, 1, tgt_len, src_len).to(dtype)

+

+ inverted_mask = 1.0 - expanded_mask

+

+ return inverted_mask.masked_fill(inverted_mask.to(torch.bool), torch.finfo(dtype).min)

+

+

+# contrastive loss function, adapted from

+# https://sachinruk.github.io/blog/pytorch/pytorch%20lightning/loss%20function/gpu/2021/03/07/CLIPSeg.html

+def contrastive_loss(logits: torch.Tensor) -> torch.Tensor:

+ return nn.functional.cross_entropy(logits, torch.arange(len(logits), device=logits.device))

+

+

+# Copied from transformers.models.clip.modeling_clip.clip_loss with clip->clipseg

+def clipseg_loss(similarity: torch.Tensor) -> torch.Tensor:

+ caption_loss = contrastive_loss(similarity)

+ image_loss = contrastive_loss(similarity.t())

+ return (caption_loss + image_loss) / 2.0

+

+

+@dataclass

+# Copied from transformers.models.clip.modeling_clip.CLIPOutput with CLIP->CLIPSeg

+class CLIPSegOutput(ModelOutput):

+ """

+ Args:

+ loss (`torch.FloatTensor` of shape `(1,)`, *optional*, returned when `return_loss` is `True`):

+ Contrastive loss for image-text similarity.

+ logits_per_image:(`torch.FloatTensor` of shape `(image_batch_size, text_batch_size)`):

+ The scaled dot product scores between `image_embeds` and `text_embeds`. This represents the image-text

+ similarity scores.

+ logits_per_text:(`torch.FloatTensor` of shape `(text_batch_size, image_batch_size)`):

+ The scaled dot product scores between `text_embeds` and `image_embeds`. This represents the text-image

+ similarity scores.

+ text_embeds(`torch.FloatTensor` of shape `(batch_size, output_dim`):

+ The text embeddings obtained by applying the projection layer to the pooled output of [`CLIPSegTextModel`].

+ image_embeds(`torch.FloatTensor` of shape `(batch_size, output_dim`):

+ The image embeddings obtained by applying the projection layer to the pooled output of

+ [`CLIPSegVisionModel`].

+ text_model_output(`BaseModelOutputWithPooling`):

+ The output of the [`CLIPSegTextModel`].

+ vision_model_output(`BaseModelOutputWithPooling`):

+ The output of the [`CLIPSegVisionModel`].

+ """

+

+ loss: Optional[torch.FloatTensor] = None

+ logits_per_image: torch.FloatTensor = None

+ logits_per_text: torch.FloatTensor = None

+ text_embeds: torch.FloatTensor = None

+ image_embeds: torch.FloatTensor = None

+ text_model_output: BaseModelOutputWithPooling = None

+ vision_model_output: BaseModelOutputWithPooling = None

+

+ def to_tuple(self) -> Tuple[Any]:

+ return tuple(

+ self[k] if k not in ["text_model_output", "vision_model_output"] else getattr(self, k).to_tuple()

+ for k in self.keys()

+ )

+

+

+@dataclass

+class CLIPSegDecoderOutput(ModelOutput):

+ """

+ Args:

+ logits (`torch.FloatTensor` of shape `(batch_size, height, width)`):

+ Classification scores for each pixel.

+ hidden_states (`tuple(torch.FloatTensor)`, *optional*, returned when `output_hidden_states=True` is passed or when `config.output_hidden_states=True`):

+ Tuple of `torch.FloatTensor` (one for the output of the embeddings, if the model has an embedding layer, +

+ one for the output of each layer) of shape `(batch_size, sequence_length, hidden_size)`.

+ attentions (`tuple(torch.FloatTensor)`, *optional*, returned when `output_attentions=True` is passed or when `config.output_attentions=True`):

+ Tuple of `torch.FloatTensor` (one for each layer) of shape `(batch_size, num_heads, sequence_length,

+ sequence_length)`. Attentions weights after the attention softmax, used to compute the weighted average in

+ the self-attention heads.

+ """

+

+ logits: torch.FloatTensor = None

+ hidden_states: Optional[Tuple[torch.FloatTensor]] = None

+ attentions: Optional[Tuple[torch.FloatTensor]] = None

+

+

+@dataclass

+class CLIPSegImageSegmentationOutput(ModelOutput):

+ """

+ Args:

+ loss (`torch.FloatTensor` of shape `(1,)`, *optional*, returned when `return_loss` is `True`):

+ Contrastive loss for image-text similarity.

+ ...

+ vision_model_output (`BaseModelOutputWithPooling`):

+ The output of the [`CLIPSegVisionModel`].

+ """

+

+ loss: Optional[torch.FloatTensor] = None

+ logits: torch.FloatTensor = None

+ conditional_embeddings: torch.FloatTensor = None

+ pooled_output: torch.FloatTensor = None

+ vision_model_output: BaseModelOutputWithPooling = None

+ decoder_output: CLIPSegDecoderOutput = None

+

+ def to_tuple(self) -> Tuple[Any]:

+ return tuple(

+ self[k] if k not in ["vision_model_output", "decoder_output"] else getattr(self, k).to_tuple()

+ for k in self.keys()

+ )

+

+

+class CLIPSegVisionEmbeddings(nn.Module):

+ # Copied from transformers.models.clip.modeling_clip.CLIPVisionEmbeddings.__init__

+ def __init__(self, config: CLIPSegVisionConfig):

+ super().__init__()

+ self.config = config

+ self.embed_dim = config.hidden_size

+ self.image_size = config.image_size

+ self.patch_size = config.patch_size

+

+ self.class_embedding = nn.Parameter(torch.randn(self.embed_dim))

+

+ self.patch_embedding = nn.Conv2d(

+ in_channels=3, out_channels=self.embed_dim, kernel_size=self.patch_size, stride=self.patch_size, bias=False

+ )

+

+ self.num_patches = (self.image_size // self.patch_size) ** 2

+ self.num_positions = self.num_patches + 1

+ self.position_embedding = nn.Embedding(self.num_positions, self.embed_dim)

+ self.register_buffer("position_ids", torch.arange(self.num_positions).expand((1, -1)))

+

+ def interpolate_position_embeddings(self, new_size):

+ if len(new_size) != 2:

+ raise ValueError("new_size should consist of 2 values")

+

+ num_patches_one_direction = int(self.num_patches**0.5)

+ # we interpolate the position embeddings in 2D

+ a = self.position_embedding.weight[1:].T.view(

+ 1, self.config.hidden_size, num_patches_one_direction, num_patches_one_direction

+ )

+ b = (

+ nn.functional.interpolate(a, new_size, mode="bicubic", align_corners=False)

+ .squeeze(0)

+ .view(self.config.hidden_size, new_size[0] * new_size[1])

+ .T

+ )

+ result = torch.cat([self.position_embedding.weight[:1], b])

+

+ return result

+

+ def forward(self, pixel_values: torch.FloatTensor) -> torch.Tensor:

+ batch_size = pixel_values.shape[0]

+ patch_embeds = self.patch_embedding(pixel_values) # shape = [*, width, grid, grid]

+ patch_embeds = patch_embeds.flatten(2).transpose(1, 2)

+

+ class_embeds = self.class_embedding.expand(batch_size, 1, -1)

+ embeddings = torch.cat([class_embeds, patch_embeds], dim=1)

+

+ if embeddings.shape[1] != self.num_positions:

+ new_shape = int(math.sqrt(embeddings.shape[1] - 1))

+ embeddings = embeddings + self.interpolate_position_embeddings((new_shape, new_shape))

+ embeddings = embeddings.to(embeddings.dtype)

+ else:

+ embeddings = embeddings + self.position_embedding(self.position_ids)

+

+ return embeddings

+

+

+# Copied from transformers.models.clip.modeling_clip.CLIPTextEmbeddings with CLIP->CLIPSeg

+class CLIPSegTextEmbeddings(nn.Module):

+ def __init__(self, config: CLIPSegTextConfig):

+ super().__init__()

+ embed_dim = config.hidden_size

+

+ self.token_embedding = nn.Embedding(config.vocab_size, embed_dim)

+ self.position_embedding = nn.Embedding(config.max_position_embeddings, embed_dim)

+

+ # position_ids (1, len position emb) is contiguous in memory and exported when serialized

+ self.register_buffer("position_ids", torch.arange(config.max_position_embeddings).expand((1, -1)))

+

+ def forward(

+ self,

+ input_ids: Optional[torch.LongTensor] = None,

+ position_ids: Optional[torch.LongTensor] = None,

+ inputs_embeds: Optional[torch.FloatTensor] = None,

+ ) -> torch.Tensor:

+ seq_length = input_ids.shape[-1] if input_ids is not None else inputs_embeds.shape[-2]

+

+ if position_ids is None:

+ position_ids = self.position_ids[:, :seq_length]

+

+ if inputs_embeds is None:

+ inputs_embeds = self.token_embedding(input_ids)

+

+ position_embeddings = self.position_embedding(position_ids)

+ embeddings = inputs_embeds + position_embeddings

+

+ return embeddings

+

+

+# Copied from transformers.models.clip.modeling_clip.CLIPAttention with CLIP->CLIPSeg

+class CLIPSegAttention(nn.Module):

+ """Multi-headed attention from 'Attention Is All You Need' paper"""

+

+ def __init__(self, config):

+ super().__init__()

+ self.config = config

+ self.embed_dim = config.hidden_size

+ self.num_heads = config.num_attention_heads

+ self.head_dim = self.embed_dim // self.num_heads

+ if self.head_dim * self.num_heads != self.embed_dim:

+ raise ValueError(

+ f"embed_dim must be divisible by num_heads (got `embed_dim`: {self.embed_dim} and `num_heads`:"

+ f" {self.num_heads})."

+ )

+ self.scale = self.head_dim**-0.5

+ self.dropout = config.attention_dropout

+

+ self.k_proj = nn.Linear(self.embed_dim, self.embed_dim)

+ self.v_proj = nn.Linear(self.embed_dim, self.embed_dim)

+ self.q_proj = nn.Linear(self.embed_dim, self.embed_dim)

+ self.out_proj = nn.Linear(self.embed_dim, self.embed_dim)

+

+ def _shape(self, tensor: torch.Tensor, seq_len: int, bsz: int):

+ return tensor.view(bsz, seq_len, self.num_heads, self.head_dim).transpose(1, 2).contiguous()

+

+ def forward(

+ self,

+ hidden_states: torch.Tensor,

+ attention_mask: Optional[torch.Tensor] = None,

+ causal_attention_mask: Optional[torch.Tensor] = None,

+ output_attentions: Optional[bool] = False,

+ ) -> Tuple[torch.Tensor, Optional[torch.Tensor], Optional[Tuple[torch.Tensor]]]:

+ """Input shape: Batch x Time x Channel"""

+

+ bsz, tgt_len, embed_dim = hidden_states.size()

+

+ # get query proj

+ query_states = self.q_proj(hidden_states) * self.scale

+ key_states = self._shape(self.k_proj(hidden_states), -1, bsz)

+ value_states = self._shape(self.v_proj(hidden_states), -1, bsz)

+

+ proj_shape = (bsz * self.num_heads, -1, self.head_dim)

+ query_states = self._shape(query_states, tgt_len, bsz).view(*proj_shape)

+ key_states = key_states.view(*proj_shape)

+ value_states = value_states.view(*proj_shape)

+

+ src_len = key_states.size(1)

+ attn_weights = torch.bmm(query_states, key_states.transpose(1, 2))

+

+ if attn_weights.size() != (bsz * self.num_heads, tgt_len, src_len):

+ raise ValueError(

+ f"Attention weights should be of size {(bsz * self.num_heads, tgt_len, src_len)}, but is"

+ f" {attn_weights.size()}"

+ )

+

+ # apply the causal_attention_mask first

+ if causal_attention_mask is not None:

+ if causal_attention_mask.size() != (bsz, 1, tgt_len, src_len):

+ raise ValueError(

+ f"Attention mask should be of size {(bsz, 1, tgt_len, src_len)}, but is"

+ f" {causal_attention_mask.size()}"

+ )

+ attn_weights = attn_weights.view(bsz, self.num_heads, tgt_len, src_len) + causal_attention_mask

+ attn_weights = attn_weights.view(bsz * self.num_heads, tgt_len, src_len)

+

+ if attention_mask is not None:

+ if attention_mask.size() != (bsz, 1, tgt_len, src_len):

+ raise ValueError(

+ f"Attention mask should be of size {(bsz, 1, tgt_len, src_len)}, but is {attention_mask.size()}"

+ )

+ attn_weights = attn_weights.view(bsz, self.num_heads, tgt_len, src_len) + attention_mask

+ attn_weights = attn_weights.view(bsz * self.num_heads, tgt_len, src_len)

+

+ attn_weights = nn.functional.softmax(attn_weights, dim=-1)

+

+ if output_attentions:

+ # this operation is a bit akward, but it's required to

+ # make sure that attn_weights keeps its gradient.

+ # In order to do so, attn_weights have to reshaped

+ # twice and have to be reused in the following

+ attn_weights_reshaped = attn_weights.view(bsz, self.num_heads, tgt_len, src_len)

+ attn_weights = attn_weights_reshaped.view(bsz * self.num_heads, tgt_len, src_len)

+ else:

+ attn_weights_reshaped = None

+

+ attn_probs = nn.functional.dropout(attn_weights, p=self.dropout, training=self.training)

+

+ attn_output = torch.bmm(attn_probs, value_states)

+

+ if attn_output.size() != (bsz * self.num_heads, tgt_len, self.head_dim):

+ raise ValueError(

+ f"`attn_output` should be of size {(bsz, self.num_heads, tgt_len, self.head_dim)}, but is"

+ f" {attn_output.size()}"

+ )

+

+ attn_output = attn_output.view(bsz, self.num_heads, tgt_len, self.head_dim)

+ attn_output = attn_output.transpose(1, 2)

+ attn_output = attn_output.reshape(bsz, tgt_len, embed_dim)

+

+ attn_output = self.out_proj(attn_output)

+

+ return attn_output, attn_weights_reshaped

+

+

+# Copied from transformers.models.clip.modeling_clip.CLIPMLP with CLIP->CLIPSeg

+class CLIPSegMLP(nn.Module):

+ def __init__(self, config):

+ super().__init__()

+ self.config = config

+ self.activation_fn = ACT2FN[config.hidden_act]

+ self.fc1 = nn.Linear(config.hidden_size, config.intermediate_size)

+ self.fc2 = nn.Linear(config.intermediate_size, config.hidden_size)

+

+ def forward(self, hidden_states: torch.Tensor) -> torch.Tensor:

+ hidden_states = self.fc1(hidden_states)

+ hidden_states = self.activation_fn(hidden_states)

+ hidden_states = self.fc2(hidden_states)

+ return hidden_states

+

+

+# Copied from transformers.models.clip.modeling_clip.CLIPEncoderLayer with CLIP->CLIPSeg

+class CLIPSegEncoderLayer(nn.Module):

+ def __init__(self, config: CLIPSegConfig):

+ super().__init__()