diff --git a/README.md b/README.md

index 5f89bacf6415d..6b5b95f135668 100644

--- a/README.md

+++ b/README.md

@@ -382,6 +382,7 @@ Current number of checkpoints:

1. **[Wav2Vec2-Conformer](https://huggingface.co/docs/transformers/model_doc/wav2vec2-conformer)** (from Facebook AI) released with the paper [FAIRSEQ S2T: Fast Speech-to-Text Modeling with FAIRSEQ](https://arxiv.org/abs/2010.05171) by Changhan Wang, Yun Tang, Xutai Ma, Anne Wu, Sravya Popuri, Dmytro Okhonko, Juan Pino.

1. **[Wav2Vec2Phoneme](https://huggingface.co/docs/transformers/model_doc/wav2vec2_phoneme)** (from Facebook AI) released with the paper [Simple and Effective Zero-shot Cross-lingual Phoneme Recognition](https://arxiv.org/abs/2109.11680) by Qiantong Xu, Alexei Baevski, Michael Auli.

1. **[WavLM](https://huggingface.co/docs/transformers/model_doc/wavlm)** (from Microsoft Research) released with the paper [WavLM: Large-Scale Self-Supervised Pre-Training for Full Stack Speech Processing](https://arxiv.org/abs/2110.13900) by Sanyuan Chen, Chengyi Wang, Zhengyang Chen, Yu Wu, Shujie Liu, Zhuo Chen, Jinyu Li, Naoyuki Kanda, Takuya Yoshioka, Xiong Xiao, Jian Wu, Long Zhou, Shuo Ren, Yanmin Qian, Yao Qian, Jian Wu, Michael Zeng, Furu Wei.

+1. **[X-CLIP](https://huggingface.co/docs/transformers/main/model_doc/xclip)** (from Microsoft Research) released with the paper [Expanding Language-Image Pretrained Models for General Video Recognition](https://arxiv.org/abs/2208.02816) by Bolin Ni, Houwen Peng, Minghao Chen, Songyang Zhang, Gaofeng Meng, Jianlong Fu, Shiming Xiang, Haibin Ling.

1. **[XGLM](https://huggingface.co/docs/transformers/model_doc/xglm)** (From Facebook AI) released with the paper [Few-shot Learning with Multilingual Language Models](https://arxiv.org/abs/2112.10668) by Xi Victoria Lin, Todor Mihaylov, Mikel Artetxe, Tianlu Wang, Shuohui Chen, Daniel Simig, Myle Ott, Naman Goyal, Shruti Bhosale, Jingfei Du, Ramakanth Pasunuru, Sam Shleifer, Punit Singh Koura, Vishrav Chaudhary, Brian O'Horo, Jeff Wang, Luke Zettlemoyer, Zornitsa Kozareva, Mona Diab, Veselin Stoyanov, Xian Li.

1. **[XLM](https://huggingface.co/docs/transformers/model_doc/xlm)** (from Facebook) released together with the paper [Cross-lingual Language Model Pretraining](https://arxiv.org/abs/1901.07291) by Guillaume Lample and Alexis Conneau.

1. **[XLM-ProphetNet](https://huggingface.co/docs/transformers/model_doc/xlm-prophetnet)** (from Microsoft Research) released with the paper [ProphetNet: Predicting Future N-gram for Sequence-to-Sequence Pre-training](https://arxiv.org/abs/2001.04063) by Yu Yan, Weizhen Qi, Yeyun Gong, Dayiheng Liu, Nan Duan, Jiusheng Chen, Ruofei Zhang and Ming Zhou.

diff --git a/README_ko.md b/README_ko.md

index cc0b790ad76a8..67add828e4104 100644

--- a/README_ko.md

+++ b/README_ko.md

@@ -334,6 +334,7 @@ Flax, PyTorch, TensorFlow 설치 페이지에서 이들을 conda로 설치하는

1. **[Wav2Vec2-Conformer](https://huggingface.co/docs/transformers/model_doc/wav2vec2-conformer)** (from Facebook AI) released with the paper [FAIRSEQ S2T: Fast Speech-to-Text Modeling with FAIRSEQ](https://arxiv.org/abs/2010.05171) by Changhan Wang, Yun Tang, Xutai Ma, Anne Wu, Sravya Popuri, Dmytro Okhonko, Juan Pino.

1. **[Wav2Vec2Phoneme](https://huggingface.co/docs/transformers/model_doc/wav2vec2_phoneme)** (from Facebook AI) released with the paper [Simple and Effective Zero-shot Cross-lingual Phoneme Recognition](https://arxiv.org/abs/2109.11680) by Qiantong Xu, Alexei Baevski, Michael Auli.

1. **[WavLM](https://huggingface.co/docs/transformers/model_doc/wavlm)** (from Microsoft Research) released with the paper [WavLM: Large-Scale Self-Supervised Pre-Training for Full Stack Speech Processing](https://arxiv.org/abs/2110.13900) by Sanyuan Chen, Chengyi Wang, Zhengyang Chen, Yu Wu, Shujie Liu, Zhuo Chen, Jinyu Li, Naoyuki Kanda, Takuya Yoshioka, Xiong Xiao, Jian Wu, Long Zhou, Shuo Ren, Yanmin Qian, Yao Qian, Jian Wu, Michael Zeng, Furu Wei.

+1. **[X-CLIP](https://huggingface.co/docs/transformers/main/model_doc/xclip)** (from Microsoft Research) released with the paper [Expanding Language-Image Pretrained Models for General Video Recognition](https://arxiv.org/abs/2208.02816) by Bolin Ni, Houwen Peng, Minghao Chen, Songyang Zhang, Gaofeng Meng, Jianlong Fu, Shiming Xiang, Haibin Ling.

1. **[XGLM](https://huggingface.co/docs/transformers/model_doc/xglm)** (From Facebook AI) released with the paper [Few-shot Learning with Multilingual Language Models](https://arxiv.org/abs/2112.10668) by Xi Victoria Lin, Todor Mihaylov, Mikel Artetxe, Tianlu Wang, Shuohui Chen, Daniel Simig, Myle Ott, Naman Goyal, Shruti Bhosale, Jingfei Du, Ramakanth Pasunuru, Sam Shleifer, Punit Singh Koura, Vishrav Chaudhary, Brian O'Horo, Jeff Wang, Luke Zettlemoyer, Zornitsa Kozareva, Mona Diab, Veselin Stoyanov, Xian Li.

1. **[XLM](https://huggingface.co/docs/transformers/model_doc/xlm)** (from Facebook) released together with the paper [Cross-lingual Language Model Pretraining](https://arxiv.org/abs/1901.07291) by Guillaume Lample and Alexis Conneau.

1. **[XLM-ProphetNet](https://huggingface.co/docs/transformers/model_doc/xlm-prophetnet)** (from Microsoft Research) released with the paper [ProphetNet: Predicting Future N-gram for Sequence-to-Sequence Pre-training](https://arxiv.org/abs/2001.04063) by Yu Yan, Weizhen Qi, Yeyun Gong, Dayiheng Liu, Nan Duan, Jiusheng Chen, Ruofei Zhang and Ming Zhou.

diff --git a/README_zh-hans.md b/README_zh-hans.md

index fe2fa45f71f39..4d93d04b7702d 100644

--- a/README_zh-hans.md

+++ b/README_zh-hans.md

@@ -358,6 +358,7 @@ conda install -c huggingface transformers

1. **[Wav2Vec2-Conformer](https://huggingface.co/docs/transformers/model_doc/wav2vec2-conformer)** (来自 Facebook AI) 伴随论文 [FAIRSEQ S2T: Fast Speech-to-Text Modeling with FAIRSEQ](https://arxiv.org/abs/2010.05171) 由 Changhan Wang, Yun Tang, Xutai Ma, Anne Wu, Sravya Popuri, Dmytro Okhonko, Juan Pino 发布。

1. **[Wav2Vec2Phoneme](https://huggingface.co/docs/transformers/model_doc/wav2vec2_phoneme)** (来自 Facebook AI) 伴随论文 [Simple and Effective Zero-shot Cross-lingual Phoneme Recognition](https://arxiv.org/abs/2109.11680) 由 Qiantong Xu, Alexei Baevski, Michael Auli 发布。

1. **[WavLM](https://huggingface.co/docs/transformers/model_doc/wavlm)** (from Microsoft Research) released with the paper [WavLM: Large-Scale Self-Supervised Pre-Training for Full Stack Speech Processing](https://arxiv.org/abs/2110.13900) by Sanyuan Chen, Chengyi Wang, Zhengyang Chen, Yu Wu, Shujie Liu, Zhuo Chen, Jinyu Li, Naoyuki Kanda, Takuya Yoshioka, Xiong Xiao, Jian Wu, Long Zhou, Shuo Ren, Yanmin Qian, Yao Qian, Jian Wu, Michael Zeng, Furu Wei.

+1. **[X-CLIP](https://huggingface.co/docs/transformers/main/model_doc/xclip)** (来自 Microsoft Research) 伴随论文 [Expanding Language-Image Pretrained Models for General Video Recognition](https://arxiv.org/abs/2208.02816) 由 Bolin Ni, Houwen Peng, Minghao Chen, Songyang Zhang, Gaofeng Meng, Jianlong Fu, Shiming Xiang, Haibin Ling 发布。

1. **[XGLM](https://huggingface.co/docs/transformers/model_doc/xglm)** (From Facebook AI) released with the paper [Few-shot Learning with Multilingual Language Models](https://arxiv.org/abs/2112.10668) by Xi Victoria Lin, Todor Mihaylov, Mikel Artetxe, Tianlu Wang, Shuohui Chen, Daniel Simig, Myle Ott, Naman Goyal, Shruti Bhosale, Jingfei Du, Ramakanth Pasunuru, Sam Shleifer, Punit Singh Koura, Vishrav Chaudhary, Brian O'Horo, Jeff Wang, Luke Zettlemoyer, Zornitsa Kozareva, Mona Diab, Veselin Stoyanov, Xian Li.

1. **[XLM](https://huggingface.co/docs/transformers/model_doc/xlm)** (来自 Facebook) 伴随论文 [Cross-lingual Language Model Pretraining](https://arxiv.org/abs/1901.07291) 由 Guillaume Lample and Alexis Conneau 发布。

1. **[XLM-ProphetNet](https://huggingface.co/docs/transformers/model_doc/xlm-prophetnet)** (来自 Microsoft Research) 伴随论文 [ProphetNet: Predicting Future N-gram for Sequence-to-Sequence Pre-training](https://arxiv.org/abs/2001.04063) 由 Yu Yan, Weizhen Qi, Yeyun Gong, Dayiheng Liu, Nan Duan, Jiusheng Chen, Ruofei Zhang and Ming Zhou 发布。

diff --git a/README_zh-hant.md b/README_zh-hant.md

index 4f5a995476149..297478f702b28 100644

--- a/README_zh-hant.md

+++ b/README_zh-hant.md

@@ -370,6 +370,7 @@ conda install -c huggingface transformers

1. **[Wav2Vec2-Conformer](https://huggingface.co/docs/transformers/model_doc/wav2vec2-conformer)** (from Facebook AI) released with the paper [FAIRSEQ S2T: Fast Speech-to-Text Modeling with FAIRSEQ](https://arxiv.org/abs/2010.05171) by Changhan Wang, Yun Tang, Xutai Ma, Anne Wu, Sravya Popuri, Dmytro Okhonko, Juan Pino.

1. **[Wav2Vec2Phoneme](https://huggingface.co/docs/transformers/model_doc/wav2vec2_phoneme)** (from Facebook AI) released with the paper [Simple and Effective Zero-shot Cross-lingual Phoneme Recognition](https://arxiv.org/abs/2109.11680) by Qiantong Xu, Alexei Baevski, Michael Auli.

1. **[WavLM](https://huggingface.co/docs/transformers/model_doc/wavlm)** (from Microsoft Research) released with the paper [WavLM: Large-Scale Self-Supervised Pre-Training for Full Stack Speech Processing](https://arxiv.org/abs/2110.13900) by Sanyuan Chen, Chengyi Wang, Zhengyang Chen, Yu Wu, Shujie Liu, Zhuo Chen, Jinyu Li, Naoyuki Kanda, Takuya Yoshioka, Xiong Xiao, Jian Wu, Long Zhou, Shuo Ren, Yanmin Qian, Yao Qian, Jian Wu, Michael Zeng, Furu Wei.

+1. **[X-CLIP](https://huggingface.co/docs/transformers/main/model_doc/xclip)** (from Microsoft Research) released with the paper [Expanding Language-Image Pretrained Models for General Video Recognition](https://arxiv.org/abs/2208.02816) by Bolin Ni, Houwen Peng, Minghao Chen, Songyang Zhang, Gaofeng Meng, Jianlong Fu, Shiming Xiang, Haibin Ling.

1. **[XGLM](https://huggingface.co/docs/transformers/model_doc/xglm)** (From Facebook AI) released with the paper [Few-shot Learning with Multilingual Language Models](https://arxiv.org/abs/2112.10668) by Xi Victoria Lin, Todor Mihaylov, Mikel Artetxe, Tianlu Wang, Shuohui Chen, Daniel Simig, Myle Ott, Naman Goyal, Shruti Bhosale, Jingfei Du, Ramakanth Pasunuru, Sam Shleifer, Punit Singh Koura, Vishrav Chaudhary, Brian O'Horo, Jeff Wang, Luke Zettlemoyer, Zornitsa Kozareva, Mona Diab, Veselin Stoyanov, Xian Li.

1. **[XLM](https://huggingface.co/docs/transformers/model_doc/xlm)** (from Facebook) released together with the paper [Cross-lingual Language Model Pretraining](https://arxiv.org/abs/1901.07291) by Guillaume Lample and Alexis Conneau.

1. **[XLM-ProphetNet](https://huggingface.co/docs/transformers/model_doc/xlm-prophetnet)** (from Microsoft Research) released with the paper [ProphetNet: Predicting Future N-gram for Sequence-to-Sequence Pre-training](https://arxiv.org/abs/2001.04063) by Yu Yan, Weizhen Qi, Yeyun Gong, Dayiheng Liu, Nan Duan, Jiusheng Chen, Ruofei Zhang and Ming Zhou.

diff --git a/docs/source/en/_toctree.yml b/docs/source/en/_toctree.yml

index 78137d2c8a74c..80d47d6d209e0 100644

--- a/docs/source/en/_toctree.yml

+++ b/docs/source/en/_toctree.yml

@@ -455,6 +455,8 @@

title: Vision Text Dual Encoder

- local: model_doc/visual_bert

title: VisualBERT

+ - local: model_doc/xclip

+ title: X-CLIP

title: Multimodal models

- isExpanded: false

sections:

diff --git a/docs/source/en/index.mdx b/docs/source/en/index.mdx

index ed04cad3dd9bf..3d9578487c380 100644

--- a/docs/source/en/index.mdx

+++ b/docs/source/en/index.mdx

@@ -176,6 +176,7 @@ The library currently contains JAX, PyTorch and TensorFlow implementations, pret

1. **[Wav2Vec2-Conformer](model_doc/wav2vec2-conformer)** (from Facebook AI) released with the paper [FAIRSEQ S2T: Fast Speech-to-Text Modeling with FAIRSEQ](https://arxiv.org/abs/2010.05171) by Changhan Wang, Yun Tang, Xutai Ma, Anne Wu, Sravya Popuri, Dmytro Okhonko, Juan Pino.

1. **[Wav2Vec2Phoneme](model_doc/wav2vec2_phoneme)** (from Facebook AI) released with the paper [Simple and Effective Zero-shot Cross-lingual Phoneme Recognition](https://arxiv.org/abs/2109.11680) by Qiantong Xu, Alexei Baevski, Michael Auli.

1. **[WavLM](model_doc/wavlm)** (from Microsoft Research) released with the paper [WavLM: Large-Scale Self-Supervised Pre-Training for Full Stack Speech Processing](https://arxiv.org/abs/2110.13900) by Sanyuan Chen, Chengyi Wang, Zhengyang Chen, Yu Wu, Shujie Liu, Zhuo Chen, Jinyu Li, Naoyuki Kanda, Takuya Yoshioka, Xiong Xiao, Jian Wu, Long Zhou, Shuo Ren, Yanmin Qian, Yao Qian, Jian Wu, Michael Zeng, Furu Wei.

+1. **[X-CLIP](model_doc/xclip)** (from Microsoft Research) released with the paper [Expanding Language-Image Pretrained Models for General Video Recognition](https://arxiv.org/abs/2208.02816) by Bolin Ni, Houwen Peng, Minghao Chen, Songyang Zhang, Gaofeng Meng, Jianlong Fu, Shiming Xiang, Haibin Ling.

1. **[XGLM](model_doc/xglm)** (From Facebook AI) released with the paper [Few-shot Learning with Multilingual Language Models](https://arxiv.org/abs/2112.10668) by Xi Victoria Lin, Todor Mihaylov, Mikel Artetxe, Tianlu Wang, Shuohui Chen, Daniel Simig, Myle Ott, Naman Goyal, Shruti Bhosale, Jingfei Du, Ramakanth Pasunuru, Sam Shleifer, Punit Singh Koura, Vishrav Chaudhary, Brian O'Horo, Jeff Wang, Luke Zettlemoyer, Zornitsa Kozareva, Mona Diab, Veselin Stoyanov, Xian Li.

1. **[XLM](model_doc/xlm)** (from Facebook) released together with the paper [Cross-lingual Language Model Pretraining](https://arxiv.org/abs/1901.07291) by Guillaume Lample and Alexis Conneau.

1. **[XLM-ProphetNet](model_doc/xlm-prophetnet)** (from Microsoft Research) released with the paper [ProphetNet: Predicting Future N-gram for Sequence-to-Sequence Pre-training](https://arxiv.org/abs/2001.04063) by Yu Yan, Weizhen Qi, Yeyun Gong, Dayiheng Liu, Nan Duan, Jiusheng Chen, Ruofei Zhang and Ming Zhou.

@@ -312,6 +313,7 @@ Flax), PyTorch, and/or TensorFlow.

| Wav2Vec2 | ✅ | ❌ | ✅ | ✅ | ✅ |

| Wav2Vec2-Conformer | ❌ | ❌ | ✅ | ❌ | ❌ |

| WavLM | ❌ | ❌ | ✅ | ❌ | ❌ |

+| X-CLIP | ❌ | ❌ | ✅ | ❌ | ❌ |

| XGLM | ✅ | ✅ | ✅ | ✅ | ✅ |

| XLM | ✅ | ❌ | ✅ | ✅ | ❌ |

| XLM-ProphetNet | ✅ | ❌ | ✅ | ❌ | ❌ |

diff --git a/docs/source/en/model_doc/xclip.mdx b/docs/source/en/model_doc/xclip.mdx

new file mode 100644

index 0000000000000..4d572b6760071

--- /dev/null

+++ b/docs/source/en/model_doc/xclip.mdx

@@ -0,0 +1,69 @@

+

+

+# X-CLIP

+

+## Overview

+

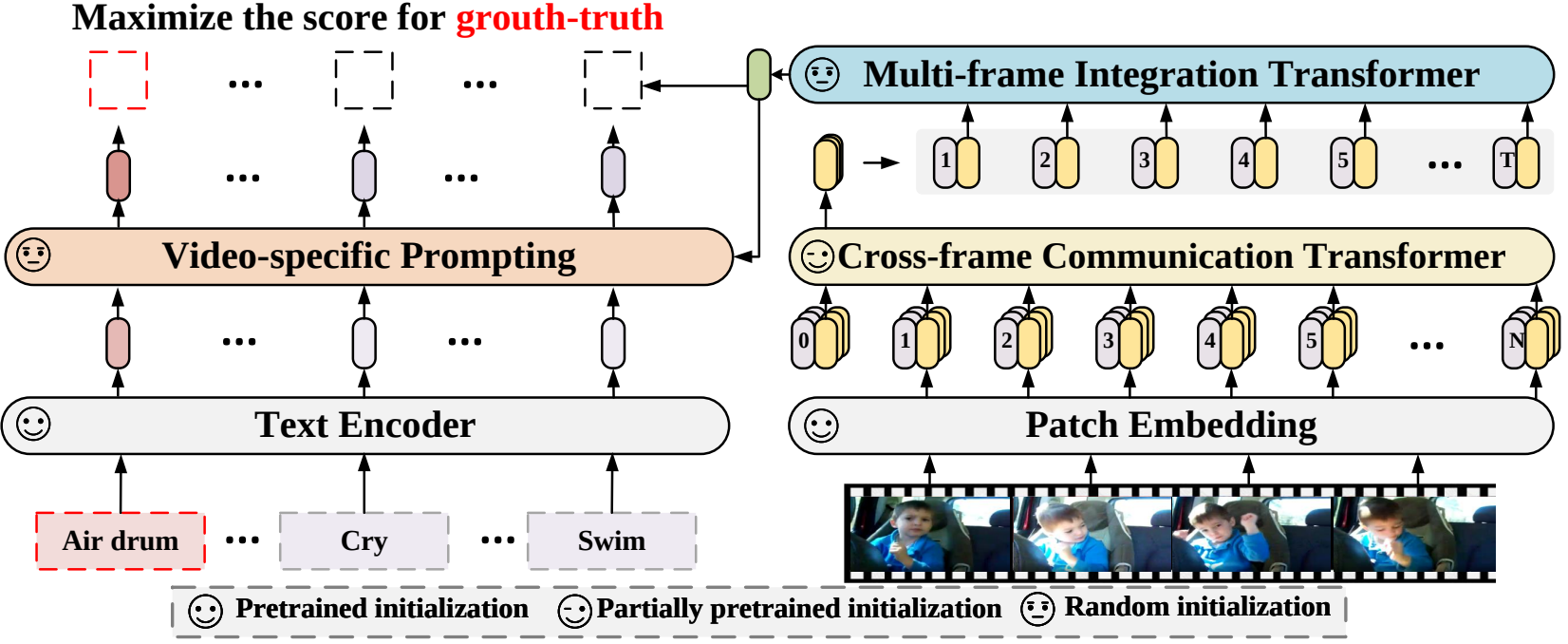

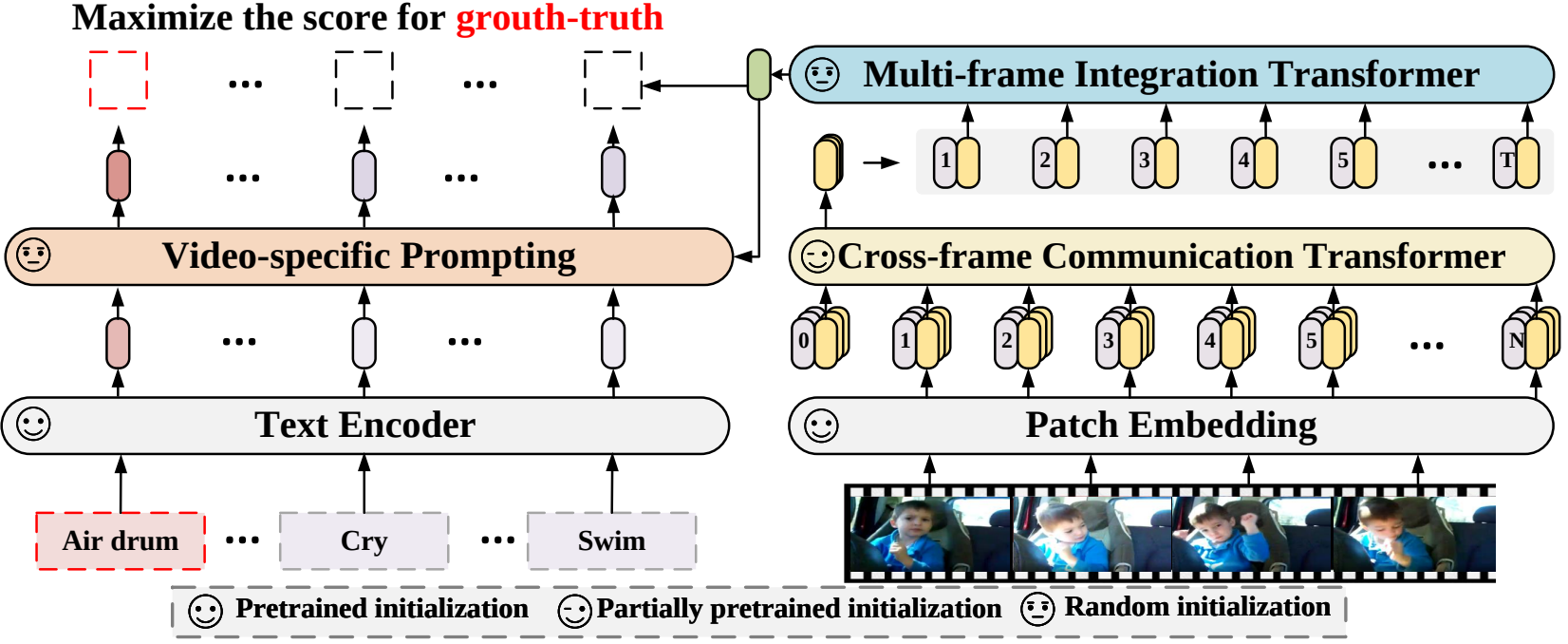

+The X-CLIP model was proposed in [Expanding Language-Image Pretrained Models for General Video Recognition](https://arxiv.org/abs/2208.02816) by Bolin Ni, Houwen Peng, Minghao Chen, Songyang Zhang, Gaofeng Meng, Jianlong Fu, Shiming Xiang, Haibin Ling.

+X-CLIP is a minimal extension of [CLIP](clip) for video. The model consists of a text encoder, a cross-frame vision encoder, a multi-frame integration Transformer, and a video-specific prompt generator.

+

+The abstract from the paper is the following:

+

+*Contrastive language-image pretraining has shown great success in learning visual-textual joint representation from web-scale data, demonstrating remarkable "zero-shot" generalization ability for various image tasks. However, how to effectively expand such new language-image pretraining methods to video domains is still an open problem. In this work, we present a simple yet effective approach that adapts the pretrained language-image models to video recognition directly, instead of pretraining a new model from scratch. More concretely, to capture the long-range dependencies of frames along the temporal dimension, we propose a cross-frame attention mechanism that explicitly exchanges information across frames. Such module is lightweight and can be plugged into pretrained language-image models seamlessly. Moreover, we propose a video-specific prompting scheme, which leverages video content information for generating discriminative textual prompts. Extensive experiments demonstrate that our approach is effective and can be generalized to different video recognition scenarios. In particular, under fully-supervised settings, our approach achieves a top-1 accuracy of 87.1% on Kinetics-400, while using 12 times fewer FLOPs compared with Swin-L and ViViT-H. In zero-shot experiments, our approach surpasses the current state-of-the-art methods by +7.6% and +14.9% in terms of top-1 accuracy under two popular protocols. In few-shot scenarios, our approach outperforms previous best methods by +32.1% and +23.1% when the labeled data is extremely limited.*

+

+Tips:

+

+- Usage of X-CLIP is identical to CLIP.

+

+ X-CLIP architecture. Taken from the original paper.

+

+This model was contributed by [nielsr](https://huggingface.co/nielsr).

+The original code can be found [here](https://github.com/microsoft/VideoX/tree/master/X-CLIP).

+

+

+## XCLIPProcessor

+

+[[autodoc]] XCLIPProcessor

+

+## XCLIPConfig

+

+[[autodoc]] XCLIPConfig

+ - from_text_vision_configs

+

+## XCLIPTextConfig

+

+[[autodoc]] XCLIPTextConfig

+

+## XCLIPVisionConfig

+

+[[autodoc]] XCLIPVisionConfig

+

+## XCLIPModel

+

+[[autodoc]] XCLIPModel

+ - forward

+ - get_text_features

+ - get_video_features

+

+## XCLIPTextModel

+

+[[autodoc]] XCLIPTextModel

+ - forward

+

+## XCLIPVisionModel

+

+[[autodoc]] XCLIPVisionModel

+ - forward
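
Reviewer note on the tip "Usage of X-CLIP is identical to CLIP": as with CLIP, classification presumably reduces to comparing one video embedding against several text embeddings and applying a softmax to the scaled similarities. The sketch below illustrates only that scoring step in plain Python. The toy vectors, the `logit_scale` value, and the helper names are assumptions for illustration and are not taken from this PR.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def score_video_against_texts(video_embedding, text_embeddings, logit_scale=100.0):
    """Return one probability per text prompt for a single video embedding."""
    video = l2_normalize(video_embedding)
    logits = []
    for text in text_embeddings:
        text = l2_normalize(text)
        cosine = sum(v * t for v, t in zip(video, text))
        logits.append(logit_scale * cosine)  # logit_scale is an assumed value
    return softmax(logits)


# Toy 3-dimensional embeddings (hypothetical values, not model outputs).
video_embedding = [0.9, 0.1, 0.0]
text_embeddings = [
    [1.0, 0.0, 0.0],  # stands in for a prompt such as "playing sports"
    [0.0, 1.0, 0.0],  # stands in for a prompt such as "eating spaghetti"
    [0.0, 0.0, 1.0],  # stands in for a prompt such as "go shopping"
]
probs = score_video_against_texts(video_embedding, text_embeddings)
best = max(range(len(probs)), key=probs.__getitem__)
```

Here `best` is the index of the prompt whose embedding is closest to the video embedding. In the real model the embeddings would come from the text and video encoders rather than hand-written lists.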

diff --git a/src/transformers/__init__.py b/src/transformers/__init__.py

index aff905b97ec5d..2b25df3250a68 100755

--- a/src/transformers/__init__.py

+++ b/src/transformers/__init__.py

@@ -165,6 +165,7 @@

"models.clip": [

"CLIP_PRETRAINED_CONFIG_ARCHIVE_MAP",

"CLIPConfig",

+ "CLIPProcessor",

"CLIPTextConfig",

"CLIPTokenizer",

"CLIPVisionConfig",

@@ -367,6 +368,13 @@

"WAVLM_PRETRAINED_CONFIG_ARCHIVE_MAP",

"WavLMConfig",

],

+ "models.x_clip": [

+ "XCLIP_PRETRAINED_CONFIG_ARCHIVE_MAP",

+ "XCLIPConfig",

+ "XCLIPProcessor",

+ "XCLIPTextConfig",

+ "XCLIPVisionConfig",

+ ],

"models.xglm": ["XGLM_PRETRAINED_CONFIG_ARCHIVE_MAP", "XGLMConfig"],

"models.xlm": ["XLM_PRETRAINED_CONFIG_ARCHIVE_MAP", "XLMConfig", "XLMTokenizer"],

"models.xlm_prophetnet": ["XLM_PROPHETNET_PRETRAINED_CONFIG_ARCHIVE_MAP", "XLMProphetNetConfig"],

@@ -638,7 +646,6 @@

_import_structure["image_utils"] = ["ImageFeatureExtractionMixin"]

_import_structure["models.beit"].append("BeitFeatureExtractor")

_import_structure["models.clip"].append("CLIPFeatureExtractor")

- _import_structure["models.clip"].append("CLIPProcessor")

_import_structure["models.convnext"].append("ConvNextFeatureExtractor")

_import_structure["models.deit"].append("DeiTFeatureExtractor")

_import_structure["models.detr"].append("DetrFeatureExtractor")

@@ -983,6 +990,15 @@

"CLIPVisionModel",

]

)

+ _import_structure["models.x_clip"].extend(

+ [

+ "XCLIP_PRETRAINED_MODEL_ARCHIVE_LIST",

+ "XCLIPModel",

+ "XCLIPPreTrainedModel",

+ "XCLIPTextModel",

+ "XCLIPVisionModel",

+ ]

+ )

_import_structure["models.convbert"].extend(

[

"CONVBERT_PRETRAINED_MODEL_ARCHIVE_LIST",

@@ -2988,6 +3004,7 @@

from .models.clip import (

CLIP_PRETRAINED_CONFIG_ARCHIVE_MAP,

CLIPConfig,

+ CLIPProcessor,

CLIPTextConfig,

CLIPTokenizer,

CLIPVisionConfig,

@@ -3164,6 +3181,13 @@

from .models.wav2vec2_phoneme import Wav2Vec2PhonemeCTCTokenizer

from .models.wav2vec2_with_lm import Wav2Vec2ProcessorWithLM

from .models.wavlm import WAVLM_PRETRAINED_CONFIG_ARCHIVE_MAP, WavLMConfig

+ from .models.x_clip import (

+ XCLIP_PRETRAINED_CONFIG_ARCHIVE_MAP,

+ XCLIPConfig,

+ XCLIPProcessor,

+ XCLIPTextConfig,

+ XCLIPVisionConfig,

+ )

from .models.xglm import XGLM_PRETRAINED_CONFIG_ARCHIVE_MAP, XGLMConfig

from .models.xlm import XLM_PRETRAINED_CONFIG_ARCHIVE_MAP, XLMConfig, XLMTokenizer

from .models.xlm_prophetnet import XLM_PROPHETNET_PRETRAINED_CONFIG_ARCHIVE_MAP, XLMProphetNetConfig

@@ -3401,7 +3425,7 @@

else:

from .image_utils import ImageFeatureExtractionMixin

from .models.beit import BeitFeatureExtractor

- from .models.clip import CLIPFeatureExtractor, CLIPProcessor

+ from .models.clip import CLIPFeatureExtractor

from .models.convnext import ConvNextFeatureExtractor

from .models.deit import DeiTFeatureExtractor

from .models.detr import DetrFeatureExtractor

@@ -4464,6 +4488,13 @@

WavLMModel,

WavLMPreTrainedModel,

)

+ from .models.x_clip import (

+ XCLIP_PRETRAINED_MODEL_ARCHIVE_LIST,

+ XCLIPModel,

+ XCLIPPreTrainedModel,

+ XCLIPTextModel,

+ XCLIPVisionModel,

+ )

from .models.xglm import XGLM_PRETRAINED_MODEL_ARCHIVE_LIST, XGLMForCausalLM, XGLMModel, XGLMPreTrainedModel

from .models.xlm import (

XLM_PRETRAINED_MODEL_ARCHIVE_LIST,

diff --git a/src/transformers/models/__init__.py b/src/transformers/models/__init__.py

index fdf315b2257d8..9db07572a65d6 100644

--- a/src/transformers/models/__init__.py

+++ b/src/transformers/models/__init__.py

@@ -150,6 +150,7 @@

wav2vec2_phoneme,

wav2vec2_with_lm,

wavlm,

+ x_clip,

xglm,

xlm,

xlm_prophetnet,

diff --git a/src/transformers/models/auto/configuration_auto.py b/src/transformers/models/auto/configuration_auto.py

index c9e6156a3843d..1b6daca6e45a8 100644

--- a/src/transformers/models/auto/configuration_auto.py

+++ b/src/transformers/models/auto/configuration_auto.py

@@ -143,6 +143,7 @@

("wav2vec2", "Wav2Vec2Config"),

("wav2vec2-conformer", "Wav2Vec2ConformerConfig"),

("wavlm", "WavLMConfig"),

+ ("xclip", "XCLIPConfig"),

("xglm", "XGLMConfig"),

("xlm", "XLMConfig"),

("xlm-prophetnet", "XLMProphetNetConfig"),

@@ -257,6 +258,7 @@

("vit_mae", "VIT_MAE_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("wav2vec2", "WAV_2_VEC_2_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("wav2vec2-conformer", "WAV2VEC2_CONFORMER_PRETRAINED_CONFIG_ARCHIVE_MAP"),

+ ("xclip", "XCLIP_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("xglm", "XGLM_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("xlm", "XLM_PRETRAINED_CONFIG_ARCHIVE_MAP"),

("xlm-prophetnet", "XLM_PROPHETNET_PRETRAINED_CONFIG_ARCHIVE_MAP"),

@@ -405,6 +407,7 @@

("wav2vec2-conformer", "Wav2Vec2-Conformer"),

("wav2vec2_phoneme", "Wav2Vec2Phoneme"),

("wavlm", "WavLM"),

+ ("xclip", "X-CLIP"),

("xglm", "XGLM"),

("xlm", "XLM"),

("xlm-prophetnet", "XLM-ProphetNet"),

@@ -425,6 +428,7 @@

("data2vec-text", "data2vec"),

("data2vec-vision", "data2vec"),

("donut-swin", "donut"),

+ ("xclip", "x_clip"),

]

)
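
Reviewer note: the last hunk adds `("xclip", "x_clip")` to what looks like a special-case table for model types whose module directory differs from the model type string. Below is a minimal sketch of how such a lookup could work, assuming the default rule is to replace hyphens with underscores. The names `SPECIAL_MODULE_NAMES` and `module_name_for` are hypothetical and are not the library's own.

```python
# Hypothetical special-case table: model type -> module directory name.
# The entries mirror the pairs visible in the hunk above.
SPECIAL_MODULE_NAMES = {
    "data2vec-text": "data2vec",
    "data2vec-vision": "data2vec",
    "donut-swin": "donut",
    "xclip": "x_clip",
}


def module_name_for(model_type: str) -> str:
    """Resolve the module directory for a model type."""
    if model_type in SPECIAL_MODULE_NAMES:
        return SPECIAL_MODULE_NAMES[model_type]
    # Assumed default: hyphens are not valid in Python module names.
    return model_type.replace("-", "_")


resolved = {name: module_name_for(name) for name in ("xclip", "xlm-prophetnet", "wavlm")}
```

Without the special-case entry, `"xclip"` would resolve to a module named `xclip`, which does not match the `x_clip` directory this PR creates.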

diff --git a/src/transformers/models/auto/feature_extraction_auto.py b/src/transformers/models/auto/feature_extraction_auto.py

index 3058aaa4334a2..625b79db06494 100644

--- a/src/transformers/models/auto/feature_extraction_auto.py

+++ b/src/transformers/models/auto/feature_extraction_auto.py

@@ -75,6 +75,7 @@

("vit_mae", "ViTFeatureExtractor"),

("wav2vec2", "Wav2Vec2FeatureExtractor"),

("wav2vec2-conformer", "Wav2Vec2FeatureExtractor"),

+ ("xclip", "CLIPFeatureExtractor"),

("yolos", "YolosFeatureExtractor"),

]

)

diff --git a/src/transformers/models/auto/modeling_auto.py b/src/transformers/models/auto/modeling_auto.py

index 0e026cb48d0c0..ea27d9ee87d9f 100644

--- a/src/transformers/models/auto/modeling_auto.py

+++ b/src/transformers/models/auto/modeling_auto.py

@@ -137,6 +137,7 @@

("wav2vec2", "Wav2Vec2Model"),

("wav2vec2-conformer", "Wav2Vec2ConformerModel"),

("wavlm", "WavLMModel"),

+ ("xclip", "XCLIPModel"),

("xglm", "XGLMModel"),

("xlm", "XLMModel"),

("xlm-prophetnet", "XLMProphetNetModel"),

diff --git a/src/transformers/models/auto/processing_auto.py b/src/transformers/models/auto/processing_auto.py

index c6f4fd98316a4..7eff84c5d5671 100644

--- a/src/transformers/models/auto/processing_auto.py

+++ b/src/transformers/models/auto/processing_auto.py

@@ -58,6 +58,7 @@

("wav2vec2-conformer", "Wav2Vec2Processor"),

("wav2vec2_with_lm", "Wav2Vec2ProcessorWithLM"),

("wavlm", "Wav2Vec2Processor"),

+ ("xclip", "XCLIPProcessor"),

]

)

diff --git a/src/transformers/models/auto/tokenization_auto.py b/src/transformers/models/auto/tokenization_auto.py

index 8ece13b79fe3f..9eb802b1fb1d8 100644

--- a/src/transformers/models/auto/tokenization_auto.py

+++ b/src/transformers/models/auto/tokenization_auto.py

@@ -253,6 +253,7 @@

("wav2vec2", ("Wav2Vec2CTCTokenizer", None)),

("wav2vec2-conformer", ("Wav2Vec2CTCTokenizer", None)),

("wav2vec2_phoneme", ("Wav2Vec2PhonemeCTCTokenizer", None)),

+ ("xclip", ("CLIPTokenizer", "CLIPTokenizerFast" if is_tokenizers_available() else None)),

(

"xglm",

(

diff --git a/src/transformers/models/clip/__init__.py b/src/transformers/models/clip/__init__.py

index 932130f8d5fdf..637d78b0da799 100644

--- a/src/transformers/models/clip/__init__.py

+++ b/src/transformers/models/clip/__init__.py

@@ -36,6 +36,7 @@

"CLIPTextConfig",

"CLIPVisionConfig",

],

+ "processing_clip": ["CLIPProcessor"],

"tokenization_clip": ["CLIPTokenizer"],

}

@@ -54,7 +55,6 @@

pass

else:

_import_structure["feature_extraction_clip"] = ["CLIPFeatureExtractor"]

- _import_structure["processing_clip"] = ["CLIPProcessor"]

try:

if not is_torch_available():

@@ -108,6 +108,7 @@

CLIPTextConfig,

CLIPVisionConfig,

)

+ from .processing_clip import CLIPProcessor

from .tokenization_clip import CLIPTokenizer

try:

@@ -125,7 +126,6 @@

pass

else:

from .feature_extraction_clip import CLIPFeatureExtractor

- from .processing_clip import CLIPProcessor

try:

if not is_torch_available():

diff --git a/src/transformers/models/x_clip/__init__.py b/src/transformers/models/x_clip/__init__.py

new file mode 100644

index 0000000000000..10d848b7bc4e6

--- /dev/null

+++ b/src/transformers/models/x_clip/__init__.py

@@ -0,0 +1,73 @@

+# flake8: noqa

+# There's no way to ignore "F401 '...' imported but unused" warnings in this

+# module, but to preserve other warnings. So, don't check this module at all.

+

+# Copyright 2022 The HuggingFace Team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+from typing import TYPE_CHECKING

+

+from ...utils import OptionalDependencyNotAvailable, _LazyModule, is_torch_available

+

+

+_import_structure = {

+ "configuration_x_clip": [

+ "XCLIP_PRETRAINED_CONFIG_ARCHIVE_MAP",

+ "XCLIPConfig",

+ "XCLIPTextConfig",

+ "XCLIPVisionConfig",

+ ],

+ "processing_x_clip": ["XCLIPProcessor"],

+}

+

+try:

+ if not is_torch_available():

+ raise OptionalDependencyNotAvailable()

+except OptionalDependencyNotAvailable:

+ pass

+else:

+ _import_structure["modeling_x_clip"] = [

+ "XCLIP_PRETRAINED_MODEL_ARCHIVE_LIST",

+ "XCLIPModel",

+ "XCLIPPreTrainedModel",

+ "XCLIPTextModel",

+ "XCLIPVisionModel",

+ ]

+

+if TYPE_CHECKING:

+ from .configuration_x_clip import (

+ XCLIP_PRETRAINED_CONFIG_ARCHIVE_MAP,

+ XCLIPConfig,

+ XCLIPTextConfig,

+ XCLIPVisionConfig,

+ )

+ from .processing_x_clip import XCLIPProcessor

+

+ try:

+ if not is_torch_available():

+ raise OptionalDependencyNotAvailable()

+ except OptionalDependencyNotAvailable:

+ pass

+ else:

+ from .modeling_x_clip import (

+ XCLIP_PRETRAINED_MODEL_ARCHIVE_LIST,

+ XCLIPModel,

+ XCLIPPreTrainedModel,

+ XCLIPTextModel,

+ XCLIPVisionModel,

+ )

+

+else:

+ import sys

+

+ sys.modules[__name__] = _LazyModule(__name__, globals()["__file__"], _import_structure, module_spec=__spec__)
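
Reviewer note: the `_import_structure` dictionary plus the `sys.modules[__name__] = _LazyModule(...)` line defer the real imports until a name is first accessed. The sketch below shows the general idea using only the standard library. `LazyNamespace` is a hypothetical stand-in written for this note and is not the `_LazyModule` implementation. It resolves names from stdlib modules (`json`, `math`) purely for demonstration.

```python
import importlib
import types


class LazyNamespace(types.ModuleType):
    """Hypothetical lazy module: imports the backing module on first attribute access."""

    def __init__(self, name, import_structure):
        super().__init__(name)
        # Invert {module: [names]} into {name: module} for quick lookup.
        self._name_to_module = {
            attr: module for module, attrs in import_structure.items() for attr in attrs
        }
        self.loaded = []  # records which modules were actually imported

    def __getattr__(self, attr):
        # Only called when normal attribute lookup fails.
        if attr not in self._name_to_module:
            raise AttributeError(f"module {self.__name__!r} has no attribute {attr!r}")
        module_name = self._name_to_module[attr]
        value = getattr(importlib.import_module(module_name), attr)
        self.loaded.append(module_name)
        setattr(self, attr, value)  # cache so later lookups skip __getattr__
        return value


lazy = LazyNamespace("demo", {"json": ["dumps", "loads"], "math": ["sqrt"]})
before = list(lazy.loaded)      # nothing has been resolved yet
encoded = lazy.dumps({"a": 1})  # first access triggers the import of json
after = list(lazy.loaded)
```

The same shape explains why the torch-only names are added to `_import_structure` inside the `else:` branch: names that are never registered can never be resolved, so a missing optional dependency only matters when a guarded name is requested.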

diff --git a/src/transformers/models/x_clip/configuration_x_clip.py b/src/transformers/models/x_clip/configuration_x_clip.py

new file mode 100644

index 0000000000000..30f9214eb8b4b

--- /dev/null

+++ b/src/transformers/models/x_clip/configuration_x_clip.py

@@ -0,0 +1,368 @@

+# coding=utf-8

+# Copyright 2022 The HuggingFace Inc. team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+""" X-CLIP model configuration"""

+

+import copy

+import os

+from typing import Union

+

+from ...configuration_utils import PretrainedConfig

+from ...utils import logging

+

+

+logger = logging.get_logger(__name__)

+

+XCLIP_PRETRAINED_CONFIG_ARCHIVE_MAP = {

+ "microsoft/xclip-base-patch32": "https://huggingface.co/microsoft/xclip-base-patch32/resolve/main/config.json",

+}

+

+

+class XCLIPTextConfig(PretrainedConfig):

+ r"""

+ This is the configuration class to store the configuration of a [`XCLIPModel`]. It is used to instantiate an X-CLIP

+ model according to the specified arguments, defining the model architecture. Instantiating a configuration with the

+ defaults will yield a similar configuration to that of the X-CLIP

+ [microsoft/xclip-base-patch32](https://huggingface.co/microsoft/xclip-base-patch32) architecture.

+

+ Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

+ documentation from [`PretrainedConfig`] for more information.

+

+

+ Args:

+ vocab_size (`int`, *optional*, defaults to 49408):

+ Vocabulary size of the X-CLIP text model. Defines the number of different tokens that can be represented by

+ the `input_ids` passed when calling [`XCLIPModel`].

+ hidden_size (`int`, *optional*, defaults to 512):

+ Dimensionality of the encoder layers and the pooler layer.

+ intermediate_size (`int`, *optional*, defaults to 2048):

+ Dimensionality of the "intermediate" (i.e., feed-forward) layer in the Transformer encoder.

+ num_hidden_layers (`int`, *optional*, defaults to 12):

+ Number of hidden layers in the Transformer encoder.

+ num_attention_heads (`int`, *optional*, defaults to 8):

+ Number of attention heads for each attention layer in the Transformer encoder.

+ max_position_embeddings (`int`, *optional*, defaults to 77):

+ The maximum sequence length that this model might ever be used with. Typically set this to something large

+ just in case (e.g., 512 or 1024 or 2048).

+ hidden_act (`str` or `function`, *optional*, defaults to `"quick_gelu"`):

+ The non-linear activation function (function or string) in the encoder and pooler. If string, `"gelu"`,

+ `"relu"`, `"selu"`, `"gelu_new"` and `"quick_gelu"` are supported.

+ layer_norm_eps (`float`, *optional*, defaults to 1e-5):

+ The epsilon used by the layer normalization layers.

+ attention_dropout (`float`, *optional*, defaults to 0.0):

+ The dropout ratio for the attention probabilities.

+ dropout (`float`, *optional*, defaults to 0.0):

+ The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.

+ initializer_range (`float`, *optional*, defaults to 0.02):

+ The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

+ initializer_factor (`float`, *optional*, defaults to 1):

+ A factor for initializing all weight matrices (should be kept to 1, used internally for initialization

+ testing).

+

+ Example:

+

+ ```python

+ >>> from transformers import XCLIPTextModel, XCLIPTextConfig

+

+ >>> # Initializing a XCLIPTextConfig with microsoft/xclip-base-patch32 style configuration

+ >>> configuration = XCLIPTextConfig()

+

+ >>> # Initializing a XCLIPTextModel (with random weights) from the microsoft/xclip-base-patch32 style configuration

+ >>> model = XCLIPTextModel(configuration)

+

+ >>> # Accessing the model configuration

+ >>> configuration = model.config

+ ```"""

+ model_type = "xclip_text_model"

+

+ def __init__(

+ self,

+ vocab_size=49408,

+ hidden_size=512,

+ intermediate_size=2048,

+ num_hidden_layers=12,

+ num_attention_heads=8,

+ max_position_embeddings=77,

+ hidden_act="quick_gelu",

+ layer_norm_eps=0.00001,

+ dropout=0.0,

+ attention_dropout=0.0,

+ initializer_range=0.02,

+ initializer_factor=1.0,

+ pad_token_id=1,

+ bos_token_id=0,

+ eos_token_id=2,

+ **kwargs

+ ):

+ super().__init__(pad_token_id=pad_token_id, bos_token_id=bos_token_id, eos_token_id=eos_token_id, **kwargs)

+

+ self.vocab_size = vocab_size

+ self.hidden_size = hidden_size

+ self.intermediate_size = intermediate_size

+ self.dropout = dropout

+ self.num_hidden_layers = num_hidden_layers

+ self.num_attention_heads = num_attention_heads

+ self.max_position_embeddings = max_position_embeddings

+ self.layer_norm_eps = layer_norm_eps

+ self.hidden_act = hidden_act

+ self.initializer_range = initializer_range

+ self.initializer_factor = initializer_factor

+ self.attention_dropout = attention_dropout

+

+ @classmethod

+ def from_pretrained(cls, pretrained_model_name_or_path: Union[str, os.PathLike], **kwargs) -> "PretrainedConfig":

+

+ config_dict, kwargs = cls.get_config_dict(pretrained_model_name_or_path, **kwargs)

+

+ # get the text config dict if we are loading from XCLIPConfig

+ if config_dict.get("model_type") == "xclip":

+ config_dict = config_dict["text_config"]

+

+ if "model_type" in config_dict and hasattr(cls, "model_type") and config_dict["model_type"] != cls.model_type:

+ logger.warning(

+ f"You are using a model of type {config_dict['model_type']} to instantiate a model of type "

+ f"{cls.model_type}. This is not supported for all configurations of models and can yield errors."

+ )

+

+ return cls.from_dict(config_dict, **kwargs)

+

+

+class XCLIPVisionConfig(PretrainedConfig):

+ r"""

+ This is the configuration class to store the configuration of a [`XCLIPVisionModel`]. It is used to instantiate an X-CLIP

+ model according to the specified arguments, defining the model architecture. Instantiating a configuration with the

+ defaults will yield a similar configuration to that of the X-CLIP

+ [microsoft/xclip-base-patch32](https://huggingface.co/microsoft/xclip-base-patch32) architecture.

+

+ Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

+ documentation from [`PretrainedConfig`] for more information.

+

+

+ Args:

+ hidden_size (`int`, *optional*, defaults to 768):

+ Dimensionality of the encoder layers and the pooler layer.

+ intermediate_size (`int`, *optional*, defaults to 3072):

+ Dimensionality of the "intermediate" (i.e., feed-forward) layer in the Transformer encoder.

+ num_hidden_layers (`int`, *optional*, defaults to 12):

+ Number of hidden layers in the Transformer encoder.

+ num_attention_heads (`int`, *optional*, defaults to 12):

+ Number of attention heads for each attention layer in the Transformer encoder.

+ mit_hidden_size (`int`, *optional*, defaults to 512):

+ Dimensionality of the encoder layers of the Multiframe Integration Transformer (MIT).

+ mit_intermediate_size (`int`, *optional*, defaults to 2048):

+ Dimensionality of the "intermediate" (i.e., feed-forward) layer in the Multiframe Integration Transformer

+ (MIT).

+ mit_num_hidden_layers (`int`, *optional*, defaults to 1):

+ Number of hidden layers in the Multiframe Integration Transformer (MIT).

+ mit_num_attention_heads (`int`, *optional*, defaults to 8):

+ Number of attention heads for each attention layer in the Multiframe Integration Transformer (MIT).

+ image_size (`int`, *optional*, defaults to 224):

+ The size (resolution) of each image.

+ patch_size (`int`, *optional*, defaults to 32):

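+ The size (resolution) of each patch.

+ num_channels (`int`, *optional*, defaults to 3):

+ The number of input channels.

+ num_frames (`int`, *optional*, defaults to 8):

+ The number of frames in each video.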

+ hidden_act (`str` or `function`, *optional*, defaults to `"quick_gelu"`):

+ The non-linear activation function (function or string) in the encoder and pooler. If string, `"gelu"`,

+ `"relu"`, `"selu"`, `"gelu_new"` and `"quick_gelu"` are supported.

+ layer_norm_eps (`float`, *optional*, defaults to 1e-5):

+ The epsilon used by the layer normalization layers.

+ dropout (`float`, *optional*, defaults to 0.0):

+ The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.

+ attention_dropout (`float`, *optional*, defaults to 0.0):

+ The dropout ratio for the attention probabilities.

+ initializer_range (`float`, *optional*, defaults to 0.02):

+ The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

+ initializer_factor (`float`, *optional*, defaults to 1):

+ A factor for initializing all weight matrices (should be kept to 1, used internally for initialization

+ testing).

+ drop_path_rate (`float`, *optional*, defaults to 0.0):

+ Stochastic depth rate.

+

+ Example:

+

+ ```python

+ >>> from transformers import XCLIPVisionModel, XCLIPVisionConfig

+

+ >>> # Initializing a XCLIPVisionConfig with microsoft/xclip-base-patch32 style configuration

+ >>> configuration = XCLIPVisionConfig()

+

+ >>> # Initializing a XCLIPVisionModel (with random weights) from the microsoft/xclip-base-patch32 style configuration

+ >>> model = XCLIPVisionModel(configuration)

+

+ >>> # Accessing the model configuration

+ >>> configuration = model.config

+ ```"""

+

+ model_type = "xclip_vision_model"

+

+ def __init__(

+ self,

+ hidden_size=768,

+ intermediate_size=3072,

+ num_hidden_layers=12,

+ num_attention_heads=12,

+ mit_hidden_size=512,

+ mit_intermediate_size=2048,

+ mit_num_hidden_layers=1,

+ mit_num_attention_heads=8,

+ num_channels=3,

+ image_size=224,

+ patch_size=32,

+ num_frames=8,

+ hidden_act="quick_gelu",

+ layer_norm_eps=0.00001,

+ dropout=0.0,

+ attention_dropout=0.0,

+ initializer_range=0.02,

+ initializer_factor=1.0,

+ drop_path_rate=0.0,

+ **kwargs

+ ):

+ super().__init__(**kwargs)

+

+ self.hidden_size = hidden_size

+ self.intermediate_size = intermediate_size

+ self.dropout = dropout

+ self.num_hidden_layers = num_hidden_layers

+ self.num_attention_heads = num_attention_heads

+ self.mit_hidden_size = mit_hidden_size

+ self.mit_intermediate_size = mit_intermediate_size

+ self.mit_num_hidden_layers = mit_num_hidden_layers

+ self.mit_num_attention_heads = mit_num_attention_heads

+ self.num_channels = num_channels

+ self.patch_size = patch_size

+ self.num_frames = num_frames

+ self.image_size = image_size

+ self.initializer_range = initializer_range

+ self.initializer_factor = initializer_factor

+ self.attention_dropout = attention_dropout

+ self.layer_norm_eps = layer_norm_eps

+ self.hidden_act = hidden_act

+ self.drop_path_rate = drop_path_rate

+

+ @classmethod

+ def from_pretrained(cls, pretrained_model_name_or_path: Union[str, os.PathLike], **kwargs) -> "PretrainedConfig":

+

+ config_dict, kwargs = cls.get_config_dict(pretrained_model_name_or_path, **kwargs)

+

+ # get the vision config dict if we are loading from XCLIPConfig

+ if config_dict.get("model_type") == "xclip":

+ config_dict = config_dict["vision_config"]

+

+ if "model_type" in config_dict and hasattr(cls, "model_type") and config_dict["model_type"] != cls.model_type:

+ logger.warning(

+ f"You are using a model of type {config_dict['model_type']} to instantiate a model of type "

+ f"{cls.model_type}. This is not supported for all configurations of models and can yield errors."

+ )

+

+ return cls.from_dict(config_dict, **kwargs)

+

+

+class XCLIPConfig(PretrainedConfig):

+ r"""

+ [`XCLIPConfig`] is the configuration class to store the configuration of a [`XCLIPModel`]. It is used to

+ instantiate an X-CLIP model according to the specified arguments, defining the text model and vision model configs.

+

+ Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

+ documentation from [`PretrainedConfig`] for more information.

+

+ Args:

+ text_config_dict (`dict`, *optional*):

+ Dictionary of configuration options used to initialize [`XCLIPTextConfig`].

+ vision_config_dict (`dict`, *optional*):

+ Dictionary of configuration options used to initialize [`XCLIPVisionConfig`].

+ projection_dim (`int`, *optional*, defaults to 512):

+ Dimensionality of text and vision projection layers.

+ prompt_layers (`int`, *optional*, defaults to 2):

+ Number of layers in the video specific prompt generator.

+ prompt_alpha (`float`, *optional*, defaults to 0.1):

+ Alpha value to use in the video specific prompt generator.

+ prompt_hidden_act (`str` or `function`, *optional*, defaults to `"quick_gelu"`):

+ The non-linear activation function (function or string) in the video specific prompt generator. If string,

+ `"gelu"`, `"relu"`, `"selu"`, `"gelu_new"` and `"quick_gelu"` are supported.

+ prompt_num_attention_heads (`int`, *optional*, defaults to 8):

+ Number of attention heads in the cross-attention of the video specific prompt generator.

+ prompt_attention_dropout (`float`, *optional*, defaults to 0.0):

+ The dropout probability for the attention layers in the video specific prompt generator.

+ prompt_projection_dropout (`float`, *optional*, defaults to 0.0):

+ The dropout probability for the projection layers in the video specific prompt generator.

+ logit_scale_init_value (`float`, *optional*, defaults to 2.6592):

+ The initial value of the *logit_scale* parameter. Default is used as per the original X-CLIP implementation.

+ kwargs (*optional*):

+ Dictionary of keyword arguments.

+ """

+

+ model_type = "xclip"

+ is_composition = True

+

+ def __init__(

+ self,

+ text_config_dict=None,

+ vision_config_dict=None,

+ projection_dim=512,

+ prompt_layers=2,

+ prompt_alpha=0.1,

+ prompt_hidden_act="quick_gelu",

+ prompt_num_attention_heads=8,

+ prompt_attention_dropout=0.0,

+ prompt_projection_dropout=0.0,

+ logit_scale_init_value=2.6592,

+ **kwargs

+ ):

+ super().__init__(text_config_dict=text_config_dict, vision_config_dict=vision_config_dict, **kwargs)

+

+ if text_config_dict is None:

+ text_config_dict = {}

+ logger.info("text_config_dict is None. Initializing the XCLIPTextConfig with default values.")

+

+ if vision_config_dict is None:

+ vision_config_dict = {}

+ logger.info("vision_config_dict is None. Initializing the XCLIPVisionConfig with default values.")

+

+ self.text_config = XCLIPTextConfig(**text_config_dict)

+ self.vision_config = XCLIPVisionConfig(**vision_config_dict)

+

+ self.projection_dim = projection_dim

+ self.prompt_layers = prompt_layers

+ self.prompt_alpha = prompt_alpha

+ self.prompt_hidden_act = prompt_hidden_act

+ self.prompt_num_attention_heads = prompt_num_attention_heads

+ self.prompt_attention_dropout = prompt_attention_dropout

+ self.prompt_projection_dropout = prompt_projection_dropout

+ self.logit_scale_init_value = logit_scale_init_value

+ self.initializer_factor = 1.0

+

+ @classmethod

+ def from_text_vision_configs(cls, text_config: XCLIPTextConfig, vision_config: XCLIPVisionConfig, **kwargs):

+ r"""

+ Instantiate a [`XCLIPConfig`] (or a derived class) from X-CLIP text model configuration and X-CLIP vision model

+ configuration.

+

+ Returns:

+ [`XCLIPConfig`]: An instance of a configuration object

+ """

+

+ return cls(text_config_dict=text_config.to_dict(), vision_config_dict=vision_config.to_dict(), **kwargs)

+

+ def to_dict(self):

+ """

+ Serializes this instance to a Python dictionary. Overrides the default [`~PretrainedConfig.to_dict`].

+

+ Returns:

+ `Dict[str, Any]`: Dictionary of all the attributes that make up this configuration instance.

+ """

+ output = copy.deepcopy(self.__dict__)

+ output["text_config"] = self.text_config.to_dict()

+ output["vision_config"] = self.vision_config.to_dict()

+ output["model_type"] = self.__class__.model_type

+ return output
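
Review note: the composite configuration above builds two sub-configs from plain dicts and flattens them back to dicts in `to_dict`. The sketch below imitates that round trip with hypothetical stand-in classes (`SubConfig` and `CompositeConfig` are illustrative names, not part of the library), so it runs without `transformers` installed. It is a minimal sketch of the pattern under those assumptions, not the actual `PretrainedConfig` machinery.

```python
import copy
import json


class SubConfig:
    """Hypothetical stand-in for a text or vision sub-configuration."""

    def __init__(self, **options):
        self.__dict__.update(options)

    def to_dict(self):
        return copy.deepcopy(self.__dict__)


class CompositeConfig:
    """Hypothetical stand-in for a config that owns two sub-configs."""

    model_type = "composite"

    def __init__(self, text_config_dict=None, vision_config_dict=None, projection_dim=512):
        # A missing dict means "fall back to the sub-config defaults".
        self.text_config = SubConfig(**(text_config_dict or {}))
        self.vision_config = SubConfig(**(vision_config_dict or {}))
        self.projection_dim = projection_dim

    @classmethod
    def from_text_vision_configs(cls, text_config, vision_config, **kwargs):
        # Sub-config objects are converted to dicts before being passed on.
        return cls(
            text_config_dict=text_config.to_dict(),
            vision_config_dict=vision_config.to_dict(),
            **kwargs,
        )

    def to_dict(self):
        output = copy.deepcopy(self.__dict__)
        # Replace nested objects with plain dicts so the result can be dumped to JSON.
        output["text_config"] = self.text_config.to_dict()
        output["vision_config"] = self.vision_config.to_dict()
        output["model_type"] = self.__class__.model_type
        return output


config = CompositeConfig.from_text_vision_configs(
    SubConfig(hidden_size=512),
    SubConfig(hidden_size=768, patch_size=32),
)
serialized = config.to_dict()
as_json = json.dumps(serialized, sort_keys=True)
```

Passing dicts rather than config objects into the constructor keeps the serialized form and the constructor arguments symmetric, which is presumably why the class exposes `from_text_vision_configs` as a convenience wrapper.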

diff --git a/src/transformers/models/x_clip/convert_x_clip_original_pytorch_to_hf.py b/src/transformers/models/x_clip/convert_x_clip_original_pytorch_to_hf.py

new file mode 100644

index 0000000000000..2f5364f440986

--- /dev/null

+++ b/src/transformers/models/x_clip/convert_x_clip_original_pytorch_to_hf.py

@@ -0,0 +1,386 @@

+# coding=utf-8

+# Copyright 2022 The HuggingFace Inc. team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+import argparse

+

+import numpy as np

+import torch

+

+import gdown

+from huggingface_hub import hf_hub_download

+from transformers import (

+ CLIPTokenizer,

+ CLIPTokenizerFast,

+ VideoMAEFeatureExtractor,

+ XCLIPConfig,

+ XCLIPModel,

+ XCLIPProcessor,

+ XCLIPTextConfig,

+ XCLIPVisionConfig,

+)

+

+

+def get_xclip_config(model_name, num_frames):

+ text_config = XCLIPTextConfig()

+

+ # derive patch size from model name

+ start_idx = model_name.find("patch")

+ patch_size = int(model_name[start_idx + len("patch") : start_idx + len("patch") + 2])

+ vision_config = XCLIPVisionConfig(patch_size=patch_size, num_frames=num_frames)

+

+ if "large" in model_name:

+ text_config.hidden_size = 768

+ text_config.intermediate_size = 3072

+ text_config.num_attention_heads = 12

+

+ vision_config.hidden_size = 1024

+ vision_config.intermediate_size = 4096

+ vision_config.num_attention_heads = 16

+ vision_config.num_hidden_layers = 24

+ vision_config.mit_hidden_size = 768

+ vision_config.mit_intermediate_size = 3072

+

+ if model_name == "xclip-large-patch14-16-frames":

+ vision_config.image_size = 336

+

+ config = XCLIPConfig.from_text_vision_configs(text_config, vision_config)

+

+ if "large" in model_name:

+ config.projection_dim = 768

+

+ return config

+

+

+def rename_key(name):

+ # text encoder

+ if name == "token_embedding.weight":

+ name = name.replace("token_embedding.weight", "text_model.embeddings.token_embedding.weight")

+ if name == "positional_embedding":

+ name = name.replace("positional_embedding", "text_model.embeddings.position_embedding.weight")

+ if "ln_1" in name:

+ name = name.replace("ln_1", "layer_norm1")

+ if "ln_2" in name:

+ name = name.replace("ln_2", "layer_norm2")

+ if "c_fc" in name:

+ name = name.replace("c_fc", "fc1")

+ if "c_proj" in name:

+ name = name.replace("c_proj", "fc2")

+ if name.startswith("transformer.resblocks"):

+ name = name.replace("transformer.resblocks", "text_model.encoder.layers")

+ if "attn.out_proj" in name and "message" not in name:

+ name = name.replace("attn.out_proj", "self_attn.out_proj")

+ if "ln_final" in name:

+ name = name.replace("ln_final", "text_model.final_layer_norm")

+ # visual encoder

+ if name == "visual.class_embedding":

+ name = name.replace("visual.class_embedding", "vision_model.embeddings.class_embedding")

+ if name == "visual.positional_embedding":

+ name = name.replace("visual.positional_embedding", "vision_model.embeddings.position_embedding.weight")

+ if name.startswith("visual.transformer.resblocks"):

+ name = name.replace("visual.transformer.resblocks", "vision_model.encoder.layers")

+ if "visual.conv1" in name:

+ name = name.replace("visual.conv1", "vision_model.embeddings.patch_embedding")

+ if "visual.ln_pre" in name:

+ name = name.replace("visual.ln_pre", "vision_model.pre_layernorm")

+ if "visual.ln_post" in name:

+ name = name.replace("visual.ln_post", "vision_model.post_layernorm")

+ if "visual.proj" in name:

+ name = name.replace("visual.proj", "visual_projection.weight")

+ if "text_projection" in name:

+ name = name.replace("text_projection", "text_projection.weight")

+ # things on top

+ if "prompts_visual_proj" in name:

+ name = name.replace("prompts_visual_proj", "prompts_visual_projection")

+ if "prompts_visual_ln" in name:

+ name = name.replace("prompts_visual_ln", "prompts_visual_layernorm")

+ # mit

+ if name == "mit.positional_embedding":

+ name = name.replace("positional", "position")

+ if name.startswith("mit.resblocks"):

+ name = name.replace("mit.resblocks", "mit.encoder.layers")

+ # prompts generator

+ if name.startswith("prompts_generator.norm"):

+ name = name.replace("prompts_generator.norm", "prompts_generator.layernorm")

+

+ return name

+

+

+def convert_state_dict(orig_state_dict, config):

+ for key in orig_state_dict.copy().keys():

+ val = orig_state_dict.pop(key)

+

+ if "attn.in_proj" in key:

+ key_split = key.split(".")

+ if key.startswith("visual"):

+ layer_num = key_split[3]

+ dim = config.vision_config.hidden_size

+ if "message_attn" in key:

+ if "weight" in key:

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.message_attn.q_proj.weight"] = val[

+ :dim, :

+ ]

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.message_attn.k_proj.weight"] = val[

+ dim : dim * 2, :

+ ]

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.message_attn.v_proj.weight"] = val[

+ -dim:, :

+ ]

+ else:

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.message_attn.q_proj.bias"] = val[

+ :dim

+ ]

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.message_attn.k_proj.bias"] = val[

+ dim : dim * 2

+ ]

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.message_attn.v_proj.bias"] = val[

+ -dim:

+ ]

+ else:

+ if "weight" in key:

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.self_attn.q_proj.weight"] = val[

+ :dim, :

+ ]

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.self_attn.k_proj.weight"] = val[

+ dim : dim * 2, :

+ ]

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.self_attn.v_proj.weight"] = val[

+ -dim:, :

+ ]

+ else:

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.self_attn.q_proj.bias"] = val[:dim]

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.self_attn.k_proj.bias"] = val[

+ dim : dim * 2

+ ]

+ orig_state_dict[f"vision_model.encoder.layers.{layer_num}.self_attn.v_proj.bias"] = val[-dim:]

+ elif key.startswith("mit"):

+ layer_num = key_split[2]

+ dim = config.vision_config.mit_hidden_size

+ if "weight" in key:

+ orig_state_dict[f"mit.encoder.layers.{layer_num}.self_attn.q_proj.weight"] = val[:dim, :]

+ orig_state_dict[f"mit.encoder.layers.{layer_num}.self_attn.k_proj.weight"] = val[dim : dim * 2, :]

+ orig_state_dict[f"mit.encoder.layers.{layer_num}.self_attn.v_proj.weight"] = val[-dim:, :]

+ else:

+ orig_state_dict[f"mit.encoder.layers.{layer_num}.self_attn.q_proj.bias"] = val[:dim]

+ orig_state_dict[f"mit.encoder.layers.{layer_num}.self_attn.k_proj.bias"] = val[dim : dim * 2]

+ orig_state_dict[f"mit.encoder.layers.{layer_num}.self_attn.v_proj.bias"] = val[-dim:]

+ else:

+ layer_num = key_split[2]

+ dim = config.text_config.hidden_size

+ if "weight" in key:

+ orig_state_dict[f"text_model.encoder.layers.{layer_num}.self_attn.q_proj.weight"] = val[:dim, :]

+ orig_state_dict[f"text_model.encoder.layers.{layer_num}.self_attn.k_proj.weight"] = val[

+ dim : dim * 2, :

+ ]

+ orig_state_dict[f"text_model.encoder.layers.{layer_num}.self_attn.v_proj.weight"] = val[-dim:, :]

+ else:

+ orig_state_dict[f"text_model.encoder.layers.{layer_num}.self_attn.q_proj.bias"] = val[:dim]

+ orig_state_dict[f"text_model.encoder.layers.{layer_num}.self_attn.k_proj.bias"] = val[

+ dim : dim * 2

+ ]

+ orig_state_dict[f"text_model.encoder.layers.{layer_num}.self_attn.v_proj.bias"] = val[-dim:]

+ else:

+ new_key_name = rename_key(key)

+ if new_key_name in ["visual_projection.weight", "text_projection.weight"]:

+ val = val.T

+ orig_state_dict[new_key_name] = val

+

+ return orig_state_dict

+

+

+def prepare_video(num_frames):

+ if num_frames == 8:

+ filename = "eating_spaghetti_8_frames.npy"

+ elif num_frames == 16:

+ filename = "eating_spaghetti.npy"

+ elif num_frames == 32:

+ filename = "eating_spaghetti_32_frames.npy"

+ else:

+ raise ValueError(f"No sample video available for num_frames={num_frames}")

+ file = hf_hub_download(

+ repo_id="hf-internal-testing/spaghetti-video",

+ repo_type="dataset",

+ filename=filename,

+ )

+ video = np.load(file)

+ return list(video)

+

+

+def convert_xclip_checkpoint(model_name, pytorch_dump_folder_path=None, push_to_hub=False):

+

+ model_to_url = {

+ # fully supervised kinetics-400 checkpoints

+ "xclip-base-patch32": "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/k400_32_8.pth",

+ "xclip-base-patch32-16-frames": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/k400_32_16.pth"

+ ),

+ "xclip-base-patch16": "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/k400_16_8.pth",

+ "xclip-base-patch16-16-frames": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/k400_16_16.pth"

+ ),

+ "xclip-large-patch14": "https://drive.google.com/u/0/uc?id=1NUOImq0o5DlQTST17iIP3vG7DgmHQuCx&export=download&confirm=t&uuid=b26caedc-88e2-473e-830a-9d158b653cdb",

+ "xclip-large-patch14-16-frames": "https://drive.google.com/u/0/uc?id=1FOYgnJc097OJ4lGwtRCCydQyVPJEOH7d&export=download&confirm=t&uuid=538fa810-e671-4050-b385-9a623f89804f",

+ # fully supervised kinetics-600 checkpoints

+ "xclip-base-patch16-kinetics-600": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/k600_16_8.pth"

+ ),

+ "xclip-base-patch16-kinetics-600-16-frames": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/k600_16_16.pth"

+ ),

+ "xclip-large-patch14-kinetics-600": "https://drive.google.com/u/0/uc?id=1FV8C1INuM91sLAN4ImjzePLIlpMSihwV&export=download&confirm=t&uuid=141d4977-4a65-44ae-864f-4b0c19f838be",

+ # few shot

+ "xclip-base-patch16-hmdb-2-shot": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/few_hmdb_2.pth"

+ ),

+ "xclip-base-patch16-hmdb-4-shot": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/few_hmdb_4.pth"

+ ),

+ "xclip-base-patch16-hmdb-8-shot": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/few_hmdb_8.pth"

+ ),

+ "xclip-base-patch16-hmdb-16-shot": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/few_hmdb_16.pth"

+ ),

+ "xclip-base-patch16-ucf-2-shot": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/few_ucf_2.pth"

+ ),

+ "xclip-base-patch16-ucf-4-shot": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/few_ucf_4.pth"

+ ),

+ "xclip-base-patch16-ucf-8-shot": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/few_ucf_8.pth"

+ ),

+ "xclip-base-patch16-ucf-16-shot": (

+ "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/few_ucf_16.pth"

+ ),

+ # zero shot

+ "xclip-base-patch16-zero-shot": "https://github.com/nbl97/X-CLIP_Model_Zoo/releases/download/v1.0/zero.pth",

+ }

+

+ checkpoint_url = model_to_url[model_name]

+ num_frames = 8

+ if "16-frames" in model_name:

+ num_frames = 16

+ elif "shot" in model_name:

+ num_frames = 32

+

+ config = get_xclip_config(model_name, num_frames)

+

+ if "drive" in checkpoint_url:

+ output = "pytorch_model.bin"

+ gdown.cached_download(checkpoint_url, output, quiet=False)

+ state_dict = torch.load(output, map_location="cpu")["model"]

+ else:

+ state_dict = torch.hub.load_state_dict_from_url(checkpoint_url)["model"]

+

+ state_dict = convert_state_dict(state_dict, config)

+

+ model = XCLIPModel(config)

+ missing_keys, unexpected_keys = model.load_state_dict(state_dict, strict=False)

+ assert missing_keys == ["text_model.embeddings.position_ids", "vision_model.embeddings.position_ids"]

+ model.eval()

+

+ size = 336 if model_name == "xclip-large-patch14-16-frames" else 224

+ feature_extractor = VideoMAEFeatureExtractor(size=size)

+ slow_tokenizer = CLIPTokenizer.from_pretrained("openai/clip-vit-base-patch32")

+ fast_tokenizer = CLIPTokenizerFast.from_pretrained("openai/clip-vit-base-patch32")

+ processor = XCLIPProcessor(feature_extractor=feature_extractor, tokenizer=fast_tokenizer)

+

+ video = prepare_video(num_frames)

+ inputs = processor(

+ text=["playing sports", "eating spaghetti", "go shopping"], videos=video, return_tensors="pt", padding=True

+ )

+

+ print("Shape of pixel values:", inputs.pixel_values.shape)

+

+ with torch.no_grad():

+ outputs = model(**inputs)

+

+ # Verify outputs

+ logits_per_video = outputs.logits_per_video

+ probs = logits_per_video.softmax(dim=1)

+ print("Probs:", probs)

+ # kinetics-400

+ if model_name == "xclip-base-patch32":

+ expected_probs = torch.tensor([[0.0019, 0.9951, 0.0030]])

+ elif model_name == "xclip-base-patch32-16-frames":

+ expected_probs = torch.tensor([[7.0999e-04, 9.9883e-01, 4.5580e-04]])

+ elif model_name == "xclip-base-patch16":

+ expected_probs = torch.tensor([[0.0083, 0.9681, 0.0236]])

+ elif model_name == "xclip-base-patch16-16-frames":

+ expected_probs = torch.tensor([[7.6937e-04, 9.9728e-01, 1.9473e-03]])

+ elif model_name == "xclip-large-patch14":

+ expected_probs = torch.tensor([[0.0062, 0.9864, 0.0075]])

+ elif model_name == "xclip-large-patch14-16-frames":

+ expected_probs = torch.tensor([[3.3877e-04, 9.9937e-01, 2.8888e-04]])

+ # kinetics-600

+ elif model_name == "xclip-base-patch16-kinetics-600":

+ expected_probs = torch.tensor([[0.0555, 0.8914, 0.0531]])

+ elif model_name == "xclip-base-patch16-kinetics-600-16-frames":

+ expected_probs = torch.tensor([[3.8554e-04, 9.9929e-01, 3.2754e-04]])

+ elif model_name == "xclip-large-patch14-kinetics-600":

+ expected_probs = torch.tensor([[0.0036, 0.9920, 0.0045]])

+ # few shot

+ elif model_name == "xclip-base-patch16-hmdb-2-shot":

+ expected_probs = torch.tensor([[7.1890e-06, 9.9994e-01, 5.6559e-05]])

+ elif model_name == "xclip-base-patch16-hmdb-4-shot":

+ expected_probs = torch.tensor([[1.0320e-05, 9.9993e-01, 6.2435e-05]])

+ elif model_name == "xclip-base-patch16-hmdb-8-shot":

+ expected_probs = torch.tensor([[4.1377e-06, 9.9990e-01, 9.8386e-05]])

+ elif model_name == "xclip-base-patch16-hmdb-16-shot":

+ expected_probs = torch.tensor([[4.1347e-05, 9.9962e-01, 3.3411e-04]])

+ elif model_name == "xclip-base-patch16-ucf-2-shot":

+ expected_probs = torch.tensor([[8.5857e-05, 9.9928e-01, 6.3291e-04]])

+ elif model_name == "xclip-base-patch16-ucf-4-shot":

+ expected_probs = torch.tensor([[8.5857e-05, 9.9928e-01, 6.3291e-04]])

+ elif model_name == "xclip-base-patch16-ucf-8-shot":

+ expected_probs = torch.tensor([[0.0027, 0.9904, 0.0070]])

+ elif model_name == "xclip-base-patch16-ucf-16-shot":

+ expected_probs = torch.tensor([[9.8219e-04, 9.9593e-01, 3.0863e-03]])

+ # zero shot

+ elif model_name == "xclip-base-patch16-zero-shot":

+ expected_probs = torch.tensor([[3.5082e-04, 9.9785e-01, 1.7966e-03]])

+ else:

+ raise ValueError(f"Model name {model_name} not supported")

+ assert torch.allclose(probs, expected_probs, atol=1e-3)

+ print("Looks ok!")

+

+ if pytorch_dump_folder_path is not None:

+ print(f"Saving model {model_name} to {pytorch_dump_folder_path}")

+ model.save_pretrained(pytorch_dump_folder_path)

+

+ if push_to_hub:

+ print("Pushing model, processor and slow tokenizer files to the hub...")

+ model.push_to_hub(model_name, organization="nielsr")

+ processor.push_to_hub(model_name, organization="nielsr")

+ slow_tokenizer.push_to_hub(model_name, organization="nielsr")

+

+

+if __name__ == "__main__":

+ parser = argparse.ArgumentParser()

+ # Conversion arguments (all optional)

+ parser.add_argument(

+ "--model_name",

+ default="xclip-base-patch32",

+ type=str,

+ help="Name of the model.",

+ )

+ parser.add_argument(

+ "--pytorch_dump_folder_path", default=None, type=str, help="Path to the output PyTorch model directory."

+ )

+ parser.add_argument(

+ "--push_to_hub", action="store_true", help="Whether or not to push the converted model to the 🤗 hub."

+ )

+

+ args = parser.parse_args()

+ convert_xclip_checkpoint(args.model_name, args.pytorch_dump_folder_path, args.push_to_hub)
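
Review note: `convert_state_dict` above splits each fused `attn.in_proj` tensor into separate query, key and value projections by slicing along the first dimension. The sketch below isolates that slicing in a hypothetical helper (`split_in_proj` is an illustrative name, not a function in the script). It uses nested lists so it runs without `torch`; the same slice expressions should apply to a tensor whose first dimension is `3 * dim`, which is an assumption about the checkpoint layout taken from the script.

```python
def split_in_proj(fused, dim):
    """Split a fused projection with 3 * dim rows into query, key and value parts."""
    if len(fused) != 3 * dim:
        raise ValueError(f"expected {3 * dim} rows, got {len(fused)}")
    query = fused[:dim]  # first block of rows
    key = fused[dim : dim * 2]  # middle block of rows
    value = fused[-dim:]  # last block of rows
    return query, key, value


# Toy fused weight with dim=2: six rows, each tagged with its role.
fused_weight = [["q0"], ["q1"], ["k0"], ["k1"], ["v0"], ["v1"]]
query, key, value = split_in_proj(fused_weight, dim=2)
```

The length check is an addition for illustration. The script itself relies on `dim` matching the configured hidden size of the vision, MIT or text encoder.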

diff --git a/src/transformers/models/x_clip/modeling_x_clip.py b/src/transformers/models/x_clip/modeling_x_clip.py

new file mode 100644

index 0000000000000..00ae9d720602a

--- /dev/null

+++ b/src/transformers/models/x_clip/modeling_x_clip.py

@@ -0,0 +1,1497 @@

+# coding=utf-8

+# Copyright 2022 Microsoft Research and The HuggingFace Team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+""" PyTorch X-CLIP model."""

+

+

+from copy import copy

+from dataclasses import dataclass

+from typing import Any, Optional, Tuple, Union

+

+import torch

+import torch.utils.checkpoint

+from torch import nn

+

+from ...activations import ACT2FN

+from ...modeling_outputs import BaseModelOutput, BaseModelOutputWithPooling

+from ...modeling_utils import PreTrainedModel

+from ...utils import (

+ ModelOutput,

+ add_start_docstrings,

+ add_start_docstrings_to_model_forward,

+ logging,

+ replace_return_docstrings,

+)

+from .configuration_x_clip import XCLIPConfig, XCLIPTextConfig, XCLIPVisionConfig

+

+

+logger = logging.get_logger(__name__)

+

+_CHECKPOINT_FOR_DOC = "microsoft/xclip-base-patch32"

+

+XCLIP_PRETRAINED_MODEL_ARCHIVE_LIST = [

+ "microsoft/xclip-base-patch32",

+ # See all X-CLIP models at https://huggingface.co/models?filter=x-clip

+]

+

+

+# Copied from transformers.models.bart.modeling_bart._expand_mask

+def _expand_mask(mask: torch.Tensor, dtype: torch.dtype, tgt_len: Optional[int] = None):

+ """

+ Expands attention_mask from `[bsz, seq_len]` to `[bsz, 1, tgt_seq_len, src_seq_len]`.

+ """

+ bsz, src_len = mask.size()

+ tgt_len = tgt_len if tgt_len is not None else src_len

+

+ expanded_mask = mask[:, None, None, :].expand(bsz, 1, tgt_len, src_len).to(dtype)

+

+ inverted_mask = 1.0 - expanded_mask

+

+ return inverted_mask.masked_fill(inverted_mask.to(torch.bool), torch.finfo(dtype).min)

+

+

+# contrastive loss function, adapted from

+# https://sachinruk.github.io/blog/pytorch/pytorch%20lightning/loss%20function/gpu/2021/03/07/clip.html

+def contrastive_loss(logits: torch.Tensor) -> torch.Tensor:

+ return nn.functional.cross_entropy(logits, torch.arange(len(logits), device=logits.device))

+

+

+# Copied from transformers.models.clip.modeling_clip.clip_loss with clip->x_clip

+def x_clip_loss(similarity: torch.Tensor) -> torch.Tensor:

+ caption_loss = contrastive_loss(similarity)

+ image_loss = contrastive_loss(similarity.t())

+ return (caption_loss + image_loss) / 2.0

+

+

+@dataclass

+class XCLIPOutput(ModelOutput):

+ """

+ Args:

+ loss (`torch.FloatTensor` of shape `(1,)`, *optional*, returned when `return_loss` is `True`):

+ Contrastive loss for video-text similarity.

+ logits_per_video (`torch.FloatTensor` of shape `(video_batch_size, text_batch_size)`):

+ The scaled dot product scores between `video_embeds` and `text_embeds`. This represents the video-text

+ similarity scores.

+ logits_per_text (`torch.FloatTensor` of shape `(text_batch_size, video_batch_size)`):

+ The scaled dot product scores between `text_embeds` and `video_embeds`. This represents the text-video

+ similarity scores.

+ text_embeds (`torch.FloatTensor` of shape `(batch_size, output_dim)`):

+ The text embeddings obtained by applying the projection layer to the pooled output of [`XCLIPTextModel`].

+ video_embeds (`torch.FloatTensor` of shape `(batch_size, output_dim)`):

+ The video embeddings obtained by applying the projection layer to the pooled output of

+ [`XCLIPVisionModel`].

+ text_model_output (`BaseModelOutputWithPooling`):

+ The output of the [`XCLIPTextModel`].

+ vision_model_output (`BaseModelOutputWithPooling`):

+ The output of the [`XCLIPVisionModel`].

+ mit_output (`BaseModelOutputWithPooling`):

+ The output of `XCLIPMultiframeIntegrationTransformer` (MIT for short).

+ """

+

+ loss: Optional[torch.FloatTensor] = None

+ logits_per_video: torch.FloatTensor = None

+ logits_per_text: torch.FloatTensor = None

+ text_embeds: torch.FloatTensor = None

+ video_embeds: torch.FloatTensor = None

+ text_model_output: BaseModelOutputWithPooling = None

+ vision_model_output: BaseModelOutputWithPooling = None

+ mit_output: BaseModelOutputWithPooling = None

+

+ def to_tuple(self) -> Tuple[Any]:

+ return tuple(

+ self[k]

+ if k not in ["text_model_output", "vision_model_output", "mit_output"]

+ else getattr(self, k).to_tuple()

+ for k in self.keys()

+ )

+

+

+# Copied from transformers.models.clip.modeling_clip.CLIPVisionEmbeddings with CLIP->XCLIP

+class XCLIPVisionEmbeddings(nn.Module):

+ def __init__(self, config: XCLIPVisionConfig):

+ super().__init__()

+ self.config = config

+ self.embed_dim = config.hidden_size

+ self.image_size = config.image_size

+ self.patch_size = config.patch_size

+

+ self.class_embedding = nn.Parameter(torch.randn(self.embed_dim))

+

+ self.patch_embedding = nn.Conv2d(

+ in_channels=3, out_channels=self.embed_dim, kernel_size=self.patch_size, stride=self.patch_size, bias=False

+ )

+

+ self.num_patches = (self.image_size // self.patch_size) ** 2

+ self.num_positions = self.num_patches + 1

+ self.position_embedding = nn.Embedding(self.num_positions, self.embed_dim)

+ self.register_buffer("position_ids", torch.arange(self.num_positions).expand((1, -1)))

+

+ def forward(self, pixel_values: torch.FloatTensor) -> torch.Tensor:

+ batch_size = pixel_values.shape[0]

+ patch_embeds = self.patch_embedding(pixel_values) # shape = [*, width, grid, grid]

+ patch_embeds = patch_embeds.flatten(2).transpose(1, 2)

+

+ class_embeds = self.class_embedding.expand(batch_size, 1, -1)

+ embeddings = torch.cat([class_embeds, patch_embeds], dim=1)

+ embeddings = embeddings + self.position_embedding(self.position_ids)

+ return embeddings

+
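A shape walk-through of the patch embedding above, as a sketch under assumed sizes (`image_size=224`, `patch_size=32`, `embed_dim=16`). These values are chosen for illustration and are not taken from any X-CLIP configuration.

```python
import torch
from torch import nn

# Assumed, illustrative sizes
image_size, patch_size, embed_dim, batch_size = 224, 32, 16, 2

# A strided convolution cuts the image into non-overlapping patches.
patch_embedding = nn.Conv2d(
    in_channels=3, out_channels=embed_dim, kernel_size=patch_size, stride=patch_size, bias=False
)
num_patches = (image_size // patch_size) ** 2  # 7 * 7 = 49
num_positions = num_patches + 1  # one extra slot for the class token

pixel_values = torch.randn(batch_size, 3, image_size, image_size)
patch_embeds = patch_embedding(pixel_values)            # (batch, embed_dim, grid, grid)
patch_embeds = patch_embeds.flatten(2).transpose(1, 2)  # (batch, num_patches, embed_dim)

# Prepend one class token per sample.
class_embedding = torch.randn(embed_dim)
class_embeds = class_embedding.expand(batch_size, 1, -1)
embeddings = torch.cat([class_embeds, patch_embeds], dim=1)  # (batch, num_positions, embed_dim)
```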

+

+# Copied from transformers.models.clip.modeling_clip.CLIPTextEmbeddings with CLIP->XCLIP

+class XCLIPTextEmbeddings(nn.Module):

+ def __init__(self, config: XCLIPTextConfig):

+ super().__init__()

+ embed_dim = config.hidden_size

+

+ self.token_embedding = nn.Embedding(config.vocab_size, embed_dim)

+ self.position_embedding = nn.Embedding(config.max_position_embeddings, embed_dim)

+

+ # position_ids (1, len position emb) is contiguous in memory and exported when serialized

+ self.register_buffer("position_ids", torch.arange(config.max_position_embeddings).expand((1, -1)))

+

+ def forward(

+ self,

+ input_ids: Optional[torch.LongTensor] = None,

+ position_ids: Optional[torch.LongTensor] = None,

+ inputs_embeds: Optional[torch.FloatTensor] = None,

+ ) -> torch.Tensor:

+ seq_length = input_ids.shape[-1] if input_ids is not None else inputs_embeds.shape[-2]

+

+ if position_ids is None:

+ position_ids = self.position_ids[:, :seq_length]

+

+ if inputs_embeds is None:

+ inputs_embeds = self.token_embedding(input_ids)

+

+ position_embeddings = self.position_embedding(position_ids)

+ embeddings = inputs_embeds + position_embeddings

+

+ return embeddings

+

+

+# Copied from transformers.models.clip.modeling_clip.CLIPAttention with CLIP->XCLIP

+class XCLIPAttention(nn.Module):

+ """Multi-headed attention from 'Attention Is All You Need' paper"""

+

+ def __init__(self, config):

+ super().__init__()

+ self.config = config

+ self.embed_dim = config.hidden_size

+ self.num_heads = config.num_attention_heads

+ self.head_dim = self.embed_dim // self.num_heads

+ if self.head_dim * self.num_heads != self.embed_dim:

+ raise ValueError(

+ f"embed_dim must be divisible by num_heads (got `embed_dim`: {self.embed_dim} and `num_heads`:"

+ f" {self.num_heads})."

+ )

+ self.scale = self.head_dim**-0.5

+ self.dropout = config.attention_dropout

+

+ self.k_proj = nn.Linear(self.embed_dim, self.embed_dim)

+ self.v_proj = nn.Linear(self.embed_dim, self.embed_dim)

+ self.q_proj = nn.Linear(self.embed_dim, self.embed_dim)

+ self.out_proj = nn.Linear(self.embed_dim, self.embed_dim)

+

+ def _shape(self, tensor: torch.Tensor, seq_len: int, bsz: int):

+ return tensor.view(bsz, seq_len, self.num_heads, self.head_dim).transpose(1, 2).contiguous()

+

+ def forward(

+ self,

+ hidden_states: torch.Tensor,

+ attention_mask: Optional[torch.Tensor] = None,

+ causal_attention_mask: Optional[torch.Tensor] = None,

+ output_attentions: Optional[bool] = False,

+ ) -> Tuple[torch.Tensor, Optional[torch.Tensor]]:

+ """Input shape: Batch x Time x Channel"""

+

+ bsz, tgt_len, embed_dim = hidden_states.size()

+

+ # get query proj

+ query_states = self.q_proj(hidden_states) * self.scale

+ key_states = self._shape(self.k_proj(hidden_states), -1, bsz)

+ value_states = self._shape(self.v_proj(hidden_states), -1, bsz)

+

+ proj_shape = (bsz * self.num_heads, -1, self.head_dim)

+ query_states = self._shape(query_states, tgt_len, bsz).view(*proj_shape)

+ key_states = key_states.view(*proj_shape)

+ value_states = value_states.view(*proj_shape)

+

+ src_len = key_states.size(1)

+ attn_weights = torch.bmm(query_states, key_states.transpose(1, 2))

+

+ if attn_weights.size() != (bsz * self.num_heads, tgt_len, src_len):

+ raise ValueError(

+ f"Attention weights should be of size {(bsz * self.num_heads, tgt_len, src_len)}, but is"

+ f" {attn_weights.size()}"

+ )

+

+ # apply the causal_attention_mask first

+ if causal_attention_mask is not None:

+ if causal_attention_mask.size() != (bsz, 1, tgt_len, src_len):

+ raise ValueError(

+ f"Causal attention mask should be of size {(bsz, 1, tgt_len, src_len)}, but is"

+ f" {causal_attention_mask.size()}"

+ )

+ attn_weights = attn_weights.view(bsz, self.num_heads, tgt_len, src_len) + causal_attention_mask

+ attn_weights = attn_weights.view(bsz * self.num_heads, tgt_len, src_len)

+

+ if attention_mask is not None:

+ if attention_mask.size() != (bsz, 1, tgt_len, src_len):

+ raise ValueError(

+ f"Attention mask should be of size {(bsz, 1, tgt_len, src_len)}, but is {attention_mask.size()}"

+ )

+ attn_weights = attn_weights.view(bsz, self.num_heads, tgt_len, src_len) + attention_mask

+ attn_weights = attn_weights.view(bsz * self.num_heads, tgt_len, src_len)

+

+ attn_weights = nn.functional.softmax(attn_weights, dim=-1)

+

+ if output_attentions:

+ # this operation is a bit awkward, but it's required to

+ # make sure that attn_weights keeps its gradient.

+ # In order to do so, attn_weights have to be reshaped

+ # twice and have to be reused in the following

+ attn_weights_reshaped = attn_weights.view(bsz, self.num_heads, tgt_len, src_len)

+ attn_weights = attn_weights_reshaped.view(bsz * self.num_heads, tgt_len, src_len)

+ else:

+ attn_weights_reshaped = None

+

+ attn_probs = nn.functional.dropout(attn_weights, p=self.dropout, training=self.training)

+

+ attn_output = torch.bmm(attn_probs, value_states)

+

+ if attn_output.size() != (bsz * self.num_heads, tgt_len, self.head_dim):

+ raise ValueError(

+ f"`attn_output` should be of size {(bsz * self.num_heads, tgt_len, self.head_dim)}, but is"

+ f" {attn_output.size()}"

+ )

+

+ attn_output = attn_output.view(bsz, self.num_heads, tgt_len, self.head_dim)

+ attn_output = attn_output.transpose(1, 2)

+ attn_output = attn_output.reshape(bsz, tgt_len, embed_dim)

+

+ attn_output = self.out_proj(attn_output)

+

+ return attn_output, attn_weights_reshaped

+
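The head-splitting and masking steps of the attention forward pass above can be sketched as follows. This is a simplified illustration with random tensors in place of the learned projections; the helper `split_heads` and all sizes are assumptions.

```python
import torch
from torch import nn

# Assumed, illustrative sizes
bsz, num_heads, tgt_len, head_dim = 2, 4, 5, 8
embed_dim = num_heads * head_dim


def split_heads(tensor):
    # (bsz, seq, embed_dim) -> (bsz * num_heads, seq, head_dim)
    tensor = tensor.view(bsz, -1, num_heads, head_dim).transpose(1, 2).contiguous()
    return tensor.view(bsz * num_heads, -1, head_dim)


# Random stand-ins for the outputs of q_proj / k_proj / v_proj
query = split_heads(torch.randn(bsz, tgt_len, embed_dim) * head_dim**-0.5)
key = split_heads(torch.randn(bsz, tgt_len, embed_dim))
value = split_heads(torch.randn(bsz, tgt_len, embed_dim))

attn_weights = torch.bmm(query, key.transpose(1, 2))  # (bsz * num_heads, tgt_len, src_len)
src_len = key.size(1)

# Additive causal mask of shape (bsz, 1, tgt_len, src_len): future positions get finfo.min
causal = torch.full((tgt_len, src_len), torch.finfo(torch.float32).min).triu(1)
causal = causal[None, None].expand(bsz, 1, tgt_len, src_len)

# The mask broadcasts over the head dimension, so the weights are reshaped around the addition.
attn_weights = attn_weights.view(bsz, num_heads, tgt_len, src_len) + causal
attn_weights = attn_weights.view(bsz * num_heads, tgt_len, src_len)
attn_probs = nn.functional.softmax(attn_weights, dim=-1)

attn_output = torch.bmm(attn_probs, value)  # (bsz * num_heads, tgt_len, head_dim)
attn_output = attn_output.view(bsz, num_heads, tgt_len, head_dim).transpose(1, 2)
attn_output = attn_output.reshape(bsz, tgt_len, embed_dim)
```

Folding the heads into the batch dimension lets a single `torch.bmm` compute all heads at once, at the cost of the two `view` calls around each mask addition.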

+

+# Copied from transformers.models.clip.modeling_clip.CLIPMLP with CLIP->XCLIP

+class XCLIPMLP(nn.Module):

+ def __init__(self, config):

+ super().__init__()

+ self.config = config

+ self.activation_fn = ACT2FN[config.hidden_act]

+ self.fc1 = nn.Linear(config.hidden_size, config.intermediate_size)