accumulate_grad_batches seems not working #12166


@amejri Seems like you're using an old version of PyTorch Lightning (<1.5.0 because the trainer flag

Hey @amejri!

Can you share more on this? How are you evaluating this conclusion?
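One way to check is to count optimizer steps rather than compare loss curves by eye. The snippet below is only a conceptual sketch in plain Python, not PyTorch Lightning's actual implementation: `train_one_epoch`, the toy data, and the choice to average the accumulated gradients are all assumptions made for illustration. It shows the effect one would expect from `accumulate_grad_batches=N`: the weights are updated once every N batches, so the number of optimizer steps per epoch drops.

```python
# Conceptual sketch only: plain Python, not PyTorch Lightning internals.
# `train_one_epoch` and the toy data are hypothetical names for illustration.

def train_one_epoch(batches, w, lr, accumulate_grad_batches=1):
    """Fit y = w * x with SGD, stepping once every N batches.

    Returns the updated weight and the number of optimizer steps taken.
    """
    grad_sum = 0.0
    pending = 0
    optimizer_steps = 0
    for i, batch in enumerate(batches, start=1):
        # Mean-squared-error gradient w.r.t. w, averaged over the batch.
        grad = sum(2 * (w * x - y) * x for x, y in batch) / len(batch)
        grad_sum += grad
        pending += 1
        # Step after N batches, or on the final (possibly partial) group.
        if pending == accumulate_grad_batches or i == len(batches):
            # Assumption: accumulated gradients are averaged before the step.
            w -= lr * grad_sum / pending
            optimizer_steps += 1
            grad_sum, pending = 0.0, 0
    return w, optimizer_steps


# Toy data: 8 batches of 2 samples each, drawn from y = 3x.
data = [(float(x), 3.0 * x) for x in range(1, 17)]
batches = [data[i:i + 2] for i in range(0, len(data), 2)]

w_plain, steps_plain = train_one_epoch(batches, w=0.0, lr=1e-3)
w_accum, steps_accum = train_one_epoch(
    batches, w=0.0, lr=1e-3, accumulate_grad_batches=4
)

print(steps_plain, steps_accum)  # 8 steps without accumulation, 2 with N=4
print(w_plain, w_accum)          # the two runs end at different weights
```

If the number of optimizer steps per epoch in the real run does not drop after setting the flag, that would suggest the flag is not being picked up. If it does drop but the curves still look alike, the x-axis of the plot (batches versus optimizer steps) is worth checking.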

Hi guys,

I'm trying to train a model while accumulating gradients over several batches. To do that, I set it in the Trainer as follows:

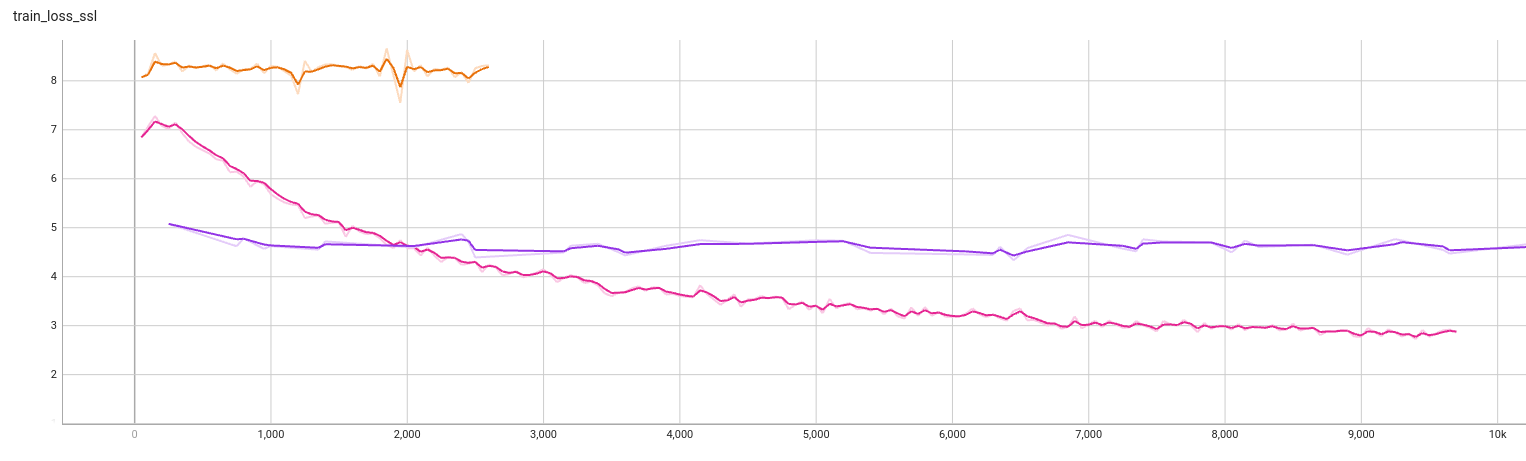

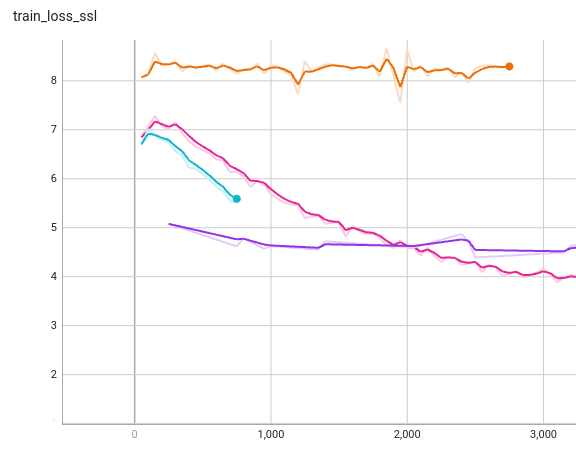

But nothing seems to change: training evolves the same way as it does without the accumulate_grad_batches parameter.

Do you have any idea how to solve this?

With kind regards,
